AI Algorithms Can't Predict Kindness: Why J. Cole's Rent Debt Move Breaks the Data

J. Cole turned a tenant's 3-year rent debt into a legendary moment that AI models can't predict. Here's why algorithms fail at measuring humanity, and what that means for the future of work and community.

YEET Magazine Staff, YEET Magazine

Published October 4, 2025

"A tenant couldn't pay rent for 3 years. His landlord let him live free anyway. J. Cole turned it into a legendary move—and proved that algorithms can't predict human kindness."

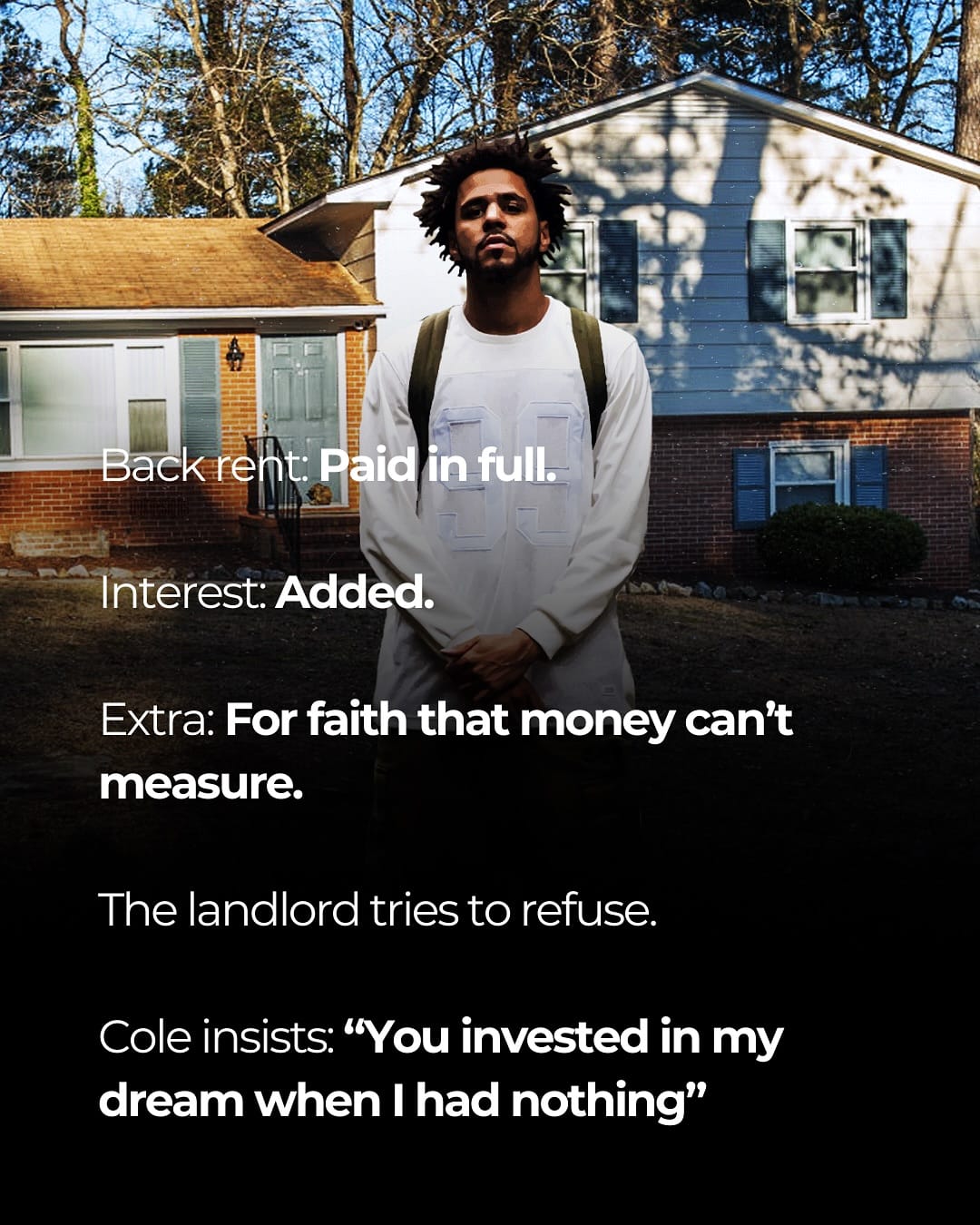

Here's what happened: A tenant couldn't pay rent for 3 years. By every algorithmic model—every debt-collection algorithm, every credit-scoring system, every predictive AI used by landlords—this should've ended in eviction. The data said: remove the tenant, maximize profit, move on. But the landlord ignored the algorithm. Instead, he let the tenant stay. Free. Then J. Cole heard the story and paid off years of back rent as a straight-up flex of humanity.

This moment breaks every automation assumption we have about how business works.

"It's about seeing someone's potential even when the world counts them out," J. Cole said. That's the thing: algorithms don't see potential. They see patterns. They saw a delinquent account. Data points. Risk factors. Numbers to optimize.

But a landlord saw a person. J. Cole saw a story worth elevating. Neither of those things computes in a machine learning model.

Why Automation Can't Model Compassion

Eviction algorithms are real. Rental management software can flag delinquent tenants automatically and recommend eviction based on payment data alone. These systems optimize for the landlord's bottom line, which makes sense from a business automation standpoint, except it removes the human variable entirely.
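To make that concrete, here is a minimal sketch of what a purely data-driven flagging rule might look like. It is an illustration, not any real vendor's logic: the `RentAccount` fields, the `recommend_action` function, and the three-month threshold are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class RentAccount:
    """Hypothetical ledger entry; the fields are assumptions for illustration."""
    tenant_id: str
    months_behind: int


def recommend_action(account: RentAccount, eviction_threshold: int = 3) -> str:
    """Return a recommendation based on payment history alone."""
    if account.months_behind >= eviction_threshold:
        return "recommend_eviction"
    if account.months_behind > 0:
        return "send_late_notice"
    return "no_action"


# Three years of missed rent is far past a threshold like this one.
tenant = RentAccount(tenant_id="unit-4B", months_behind=36)
print(recommend_action(tenant))  # recommend_eviction
```

Notice what the rule never asks: who the tenant is, why the payments stopped, or whether things might turn around. Those inputs simply are not in the function signature.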

The landlord could've used that system. Instead, he chose empathy. That's not a bug in human decision-making. That's the feature AI hasn't figured out.

What the Data Actually Missed

Every credit score, every risk algorithm, every predictive model said: this tenant is a loss. But the landlord had context. Maybe he knew the person. Maybe he saw the struggle. Maybe he believed in the possibility of change—something algorithms literally cannot compute because they're trained on historical data, not future potential.

J. Cole amplifying this story is important because it reminds us that AI automation and algorithmic decision-making have blind spots. Blind spots that cost people their homes.

When cities and landlords rely on eviction algorithms without human override, they're essentially outsourcing compassion to a machine. And that machine will never choose kindness over optimization.

The Future of Work: When Humans Beat the Algorithm

This is what we need more of in automation conversations. Not just "will AI replace jobs?" but "should AI make decisions that affect lives without human judgment?"

The future of work isn't about choosing between humans and algorithms. It's about humans using judgment to override algorithms when the moment calls for it. The landlord did that. J. Cole amplified it. And suddenly a story about debt becomes a story about why we still need people in the loop.

Questions You're Probably Asking

Q: Are eviction algorithms actually being used right now?

A: Yes. Services like Experian's RentBureau collect rental payment history, and various property management systems use predictive scoring to flag high-risk tenants. Landlords feed those outputs into the decisions they make every day.

Q: Could an AI have predicted J. Cole would pay the rent?

A: No. That's not in any training dataset. Algorithms work on historical patterns. Acts of pure kindness don't fit data models because they're not driven by profit optimization or logical incentives.

Q: What's the AI takeaway here?

A: Algorithms are great at scaling decisions, but they're terrible at edge cases involving human judgment. This story is an edge case. It's why some decisions—especially those affecting people's homes—should always have human approval built in.
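For the builders in the room, "human approval built in" can be as simple as a gate that refuses to finalize a high-stakes recommendation until a person has looked at it. The sketch below is one possible shape for that, under stated assumptions: the `decide` function, the `Decision` record, the `HIGH_STAKES` set, and the action names are hypothetical and not drawn from any real system.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Assumption: recommendations in this set always require human sign-off.
HIGH_STAKES = {"recommend_eviction"}


@dataclass
class Decision:
    recommended: str   # what the algorithm suggested
    final_action: str  # what actually happens
    decided_by: str    # "algorithm" or "human"


def decide(recommended: str, reviewer: Callable[[str], Optional[str]]) -> Decision:
    """Route high-stakes recommendations through a human reviewer.

    The reviewer returns None to approve the recommendation as is,
    or a different action name to override it.
    """
    if recommended not in HIGH_STAKES:
        return Decision(recommended, recommended, "algorithm")
    override = reviewer(recommended)
    final_action = recommended if override is None else override
    return Decision(recommended, final_action, "human")


def lenient_landlord(recommended: str) -> Optional[str]:
    """A reviewer who knows the tenant and chooses to let them stay."""
    return "let_tenant_stay"


result = decide("recommend_eviction", lenient_landlord)
print(result.final_action, result.decided_by)  # let_tenant_stay human
```

The design choice worth noting is that the algorithm still runs and still flags risk. The gate only changes who gets the last word, and it records that a human made the call.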

Q: Is the lesson "AI is bad"?

A: No. The lesson is "AI without human override is incomplete." The algorithm wasn't wrong to flag risk. It was incomplete because it couldn't factor in compassion, context, and the possibility of human growth.

Q: What does this mean for future work?

A: Workers and decision-makers need to stay in the loop. Automation should enhance human judgment, not replace it. This landlord's choice proves that.

What You Can Do Now

- Check if your community is using automated eviction algorithms—and if so, push for human review processes.

- Share stories like J. Cole's to remind people that kindness still matters in a data-driven world.

- In your own work, ask whether you're following an algorithm or using your own judgment. Sometimes judgment should win.

- Support organizations fighting algorithmic bias in housing and lending decisions.
