How AI Fact-Checking Failed: The Daily Beast Retraction Shows Why Algorithms Can't Replace Human Verification

The Daily Beast's retraction of a false Epstein story reveals why AI-driven fact-checking isn't ready to replace human journalists. Algorithms can amplify bad data—but they can't catch editorial failures.

How AI Fact-Checking Algorithms Failed to Catch False Claims Before Publishing

The Daily Beast's retraction of false Melania Trump-Epstein claims exposes a brutal truth: AI-powered fact-checking systems can't catch editorial negligence. Algorithms excel at pattern matching and speed, but they need clean source data and human editorial judgment to work. When journalists skip verification steps and feed garbage into the machine, automation amplifies the garbage. This case shows why newsrooms still need humans doing the grunt work, and why automating journalism's integrity layer is a dangerous game.

The original article relied on Michael Wolff's unverified claims linking Melania Trump to Epstein through unnamed contacts. No algorithm flagged this. Why? Because the article's shell looked legitimate: it cited a source, included quotes, and had proper formatting. Any automated check that looks only at structure would have green-lit it. Humans skipped the actual verification step.

Why Automation Breaks Down in High-Stakes Publishing

Modern newsrooms are testing AI-powered content moderation, fact-checking bots, and automated source verification. Sounds good in theory. But these tools work best on structured, low-stakes data—weather reports, sports scores, stock prices. A claim about a public figure requires context, motivation analysis, and source credibility assessment. Machines struggle with nuance.

The Daily Beast's editors relied on workflow speed instead of rigor. They likely had automated publishing pipelines that moved content from draft to live based on template compliance, not truth. When an outlet prioritizes algorithmic efficiency over human accountability, retractions become inevitable.
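To make the gap between template compliance and truth concrete, here is a minimal sketch in Python. It is not The Daily Beast's pipeline, whose internals are not public; the `Draft` fields and both check functions are hypothetical names chosen for illustration. It only shows how a draft can satisfy every structural requirement while failing a substantive one.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Draft:
    headline: str
    body: str
    quotes: list[str] = field(default_factory=list)
    cited_sources: list[str] = field(default_factory=list)
    # Hypothetical field: sources an editor has independently confirmed.
    verified_sources: set[str] = field(default_factory=set)


def passes_template_check(draft: Draft) -> bool:
    """Structural check only: is every expected part of the article present?"""
    return bool(
        draft.headline and draft.body and draft.quotes and draft.cited_sources
    )


def passes_verification_check(draft: Draft) -> bool:
    """Substantive check: has a human confirmed every cited source?"""
    return bool(draft.cited_sources) and all(
        source in draft.verified_sources for source in draft.cited_sources
    )


# A draft that looks complete but rests on one unconfirmed source.
draft = Draft(
    headline="Example headline",
    body="Example body text.",
    quotes=["An example quote."],
    cited_sources=["single unverified author"],
)

template_ok = passes_template_check(draft)
verification_ok = passes_verification_check(draft)
print(template_ok, verification_ok)
```

A pipeline that gates publication on the first function alone would let this draft through. Gating on the second forces someone to fill in `verified_sources` first.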

The Real Damage: Misinformation at Scale

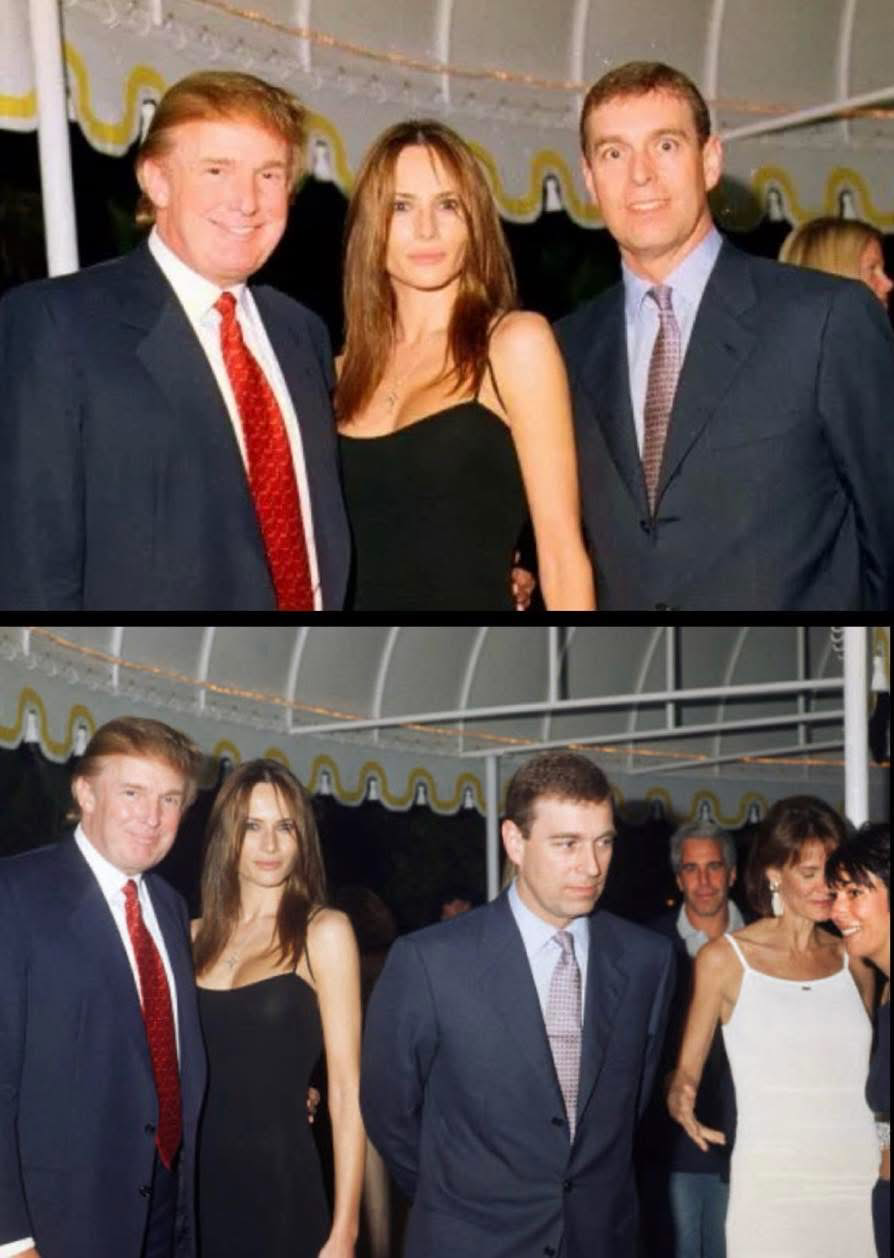

Once published, the false story got amplified by recommendation algorithms on social media. Facebook's algorithm, TikTok's feed, Twitter's engagement metrics—they all saw engagement and pushed it wider. The story became "viral" not because it was true, but because humans found it shocking and clicked it.

This is the feedback loop killing journalism: bad content gets published without human verification, algorithms detect the engagement and promote it, network effects spread the misinformation, and legal action follows, leaving the outlet with a loss and a damaged reputation. Meanwhile, the algorithms did exactly what they were built to do: they maximized clicks. They just maximized the wrong thing.
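The "maximized the wrong thing" point can be sketched in a few lines of Python. This is a toy model, not any platform's real ranking system: the `Post` fields, the share weighting, and the `verified` flag are all assumptions made for illustration. The first ranking function never looks at whether a story is verified, so the shocking claim rises to the top.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    clicks: int
    shares: int
    verified: bool  # hypothetical signal; assumed available for this sketch


def engagement_score(post: Post) -> int:
    # Assumed weighting: a share counts for more than a click.
    return post.clicks + 3 * post.shares


def rank_by_engagement(posts: list) -> list:
    """Engagement-only ranking: truth never enters the objective."""
    return sorted(posts, key=engagement_score, reverse=True)


def rank_with_verification(posts: list) -> list:
    """Alternative objective: verified posts first, then by engagement."""
    return sorted(
        posts,
        key=lambda post: (post.verified, engagement_score(post)),
        reverse=True,
    )


posts = [
    Post("Careful, verified report", clicks=400, shares=20, verified=True),
    Post("Shocking, unverified claim", clicks=5000, shares=900, verified=False),
]

top_by_engagement = rank_by_engagement(posts)[0].title
top_with_verification = rank_with_verification(posts)[0].title
print(top_by_engagement)
print(top_with_verification)
```

Both functions work as designed. The difference is entirely in what the sort key rewards, which is a decision made by people, not by the algorithm.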

What The Daily Beast's Retraction Actually Signals

The official apology acknowledged failing "editorial standards." Translation: humans cut corners, not machines. But here's the uncomfortable part—pressured newsrooms are automating exactly those corner-cutting steps. Wire story aggregation, auto-publishing, minimal fact-checking, bulk social media scheduling. Speed over accuracy is becoming the default.

Legal experts are calling this a win for accountability. They're right. But it's also a warning: if news organizations don't rebuild human verification workflows, they'll face more retractions, more lawsuits, and more erosion of public trust.

The Algorithm Transparency Problem

Melania Trump's legal team had to manually demand a retraction. There's no automated appeals process for false claims. There's no API where victims of misinformation can flag content and have it fact-checked instantly by independent systems. Newsrooms publish with algorithmic speed but handle corrections with bureaucratic slowness.

Better systems would require transparency—showing how claims were verified, what sources were checked, why content passed editorial review. Most outlets won't do this because it exposes sloppy processes. Automation loves opacity.
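One way to picture that kind of transparency is a verification record attached to each claim. The Python sketch below is an assumption about what such a record might hold, not a description of any outlet's system; `SourceCheck`, `VerificationRecord`, and their fields are hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class SourceCheck:
    source: str      # who or what was consulted
    method: str      # how it was checked, e.g. "phone call", "court records"
    confirmed: bool  # did the check support the claim?


@dataclass
class VerificationRecord:
    claim: str
    checks: list = field(default_factory=list)
    approved_by: str = ""  # the editor accountable for sign-off

    def is_publishable(self) -> bool:
        # Requires at least one check, all checks confirmed, and a named editor.
        return (
            bool(self.checks)
            and all(check.confirmed for check in self.checks)
            and bool(self.approved_by)
        )

    def to_json(self) -> str:
        # A serialized audit trail that could be shown alongside the story.
        return json.dumps(asdict(self), indent=2)


record = VerificationRecord(
    claim="Example claim about a public figure",
    checks=[SourceCheck("single author interview", "none", confirmed=False)],
)
print(record.is_publishable())
print(record.to_json())
```

Publishing the record exposes exactly what the paragraph above describes: which sources were checked, how, and who signed off.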

What Should Change in Newsroom Automation

Smart outlets are rebuilding verification into their workflow automation. Instead of auto-publishing, they're using AI to flag high-risk claims for human review. Instead of relying on algorithms to catch false sources, they're building databases of verified experts and cross-referencing automatically. The tool becomes a helper, not a replacement.

Tools like Checkdesk and ClaimBuster show what's possible—AI surfaces suspicious claims, but humans decide what gets published. The bottleneck becomes intentional, not accidental.
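The flag-for-review pattern can be sketched as a small triage step. The Python below is a rough illustration under stated assumptions: it does not use the Checkdesk or ClaimBuster APIs, and the `RISK_PATTERNS` keyword lists, the two-source rule, and the queue names are hypothetical. Note that no branch auto-publishes. The code only decides how much human attention a draft gets.

```python
import re

# Hypothetical risk signals; a real newsroom would tune these lists.
RISK_PATTERNS = {
    "anonymous_sourcing": re.compile(r"\b(unnamed|anonymous|sources say)\b", re.I),
    "legal_exposure": re.compile(r"\b(trafficking|fraud|criminal|abuse)\b", re.I),
}


def risk_flags(text: str, names_person: bool, source_count: int) -> list:
    """Return the names of every risk signal found in a draft."""
    flags = [name for name, pattern in RISK_PATTERNS.items() if pattern.search(text)]
    # Assumed rule: a claim about a named person needs at least two sources.
    if names_person and source_count < 2:
        flags.append("single_source_claim_about_person")
    return flags


def route(flags: list) -> str:
    """Send flagged drafts to a human review queue; never publish directly."""
    return "human_review" if flags else "standard_edit"


risky = "An author claims, citing unnamed contacts, that a public figure was involved in fraud."
routine = "The city council approved the budget on Tuesday."

risky_flags = risk_flags(risky, names_person=True, source_count=1)
routine_flags = risk_flags(routine, names_person=False, source_count=2)
print(risky_flags, route(risky_flags))
print(routine_flags, route(routine_flags))
```

Keyword rules like these are crude and will miss plenty. Their job is to surface drafts for a person to judge, not to deliver a verdict.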

The Future of Work in Journalism

This case reframes what journalists actually do. They're not faster than machines at writing or publishing. They're better at judgment calls under uncertainty. That skill becomes more valuable as misinformation gets easier to generate. The roles that survive automation are the ones that require verification, ethical decision-making, and accountability.

Outlets that hand verification over to automation entirely will face blowback. Outlets that keep humans as quality control for their machines will stay trusted.

Common Questions About AI and Journalism Accountability

Can AI actually detect misinformation? Partially. AI is good at spotting patterns (this claim matches known false narratives) but bad at assessing context (is this claim true in this specific situation?). It's a first-pass filter, not a final arbiter.
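A toy example of that first-pass filter, in Python: the sketch compares a new claim against a list of known false narratives using word overlap (Jaccard similarity). The narrative list, the 0.6 threshold, and the function names are assumptions for illustration, and production systems use far richer models. The limitation is the same, though. A miss means "not seen before", not "true".

```python
import re
from typing import Optional

# Hypothetical list of previously debunked narratives.
KNOWN_FALSE = ["the moon landing was staged in a studio"]


def tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def jaccard(a: str, b: str) -> float:
    """Share of distinct words the two texts have in common."""
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0


def match_known_false(claim: str, threshold: float = 0.6) -> Optional[str]:
    """Return the closest known false narrative above threshold, else None."""
    best = max(KNOWN_FALSE, key=lambda known: jaccard(claim, known))
    return best if jaccard(claim, best) >= threshold else None


seen_before = match_known_false("The moon landing was staged in a Hollywood studio")
novel_claim = match_known_false(
    "A public figure was introduced to a financier by unnamed contacts"
)
print(seen_before)
print(novel_claim)  # None: the filter has no opinion on new claims
```

The second claim passes the filter untouched, which is exactly why a human still has to check it.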

Why didn't automation catch this before publishing? Because nobody programmed the newsroom to use it that way. Automation moves at the speed of editorial will. If management prioritizes speed over accuracy, algorithms will optimize for speed.

Will more outlets face retractions like this? Yes, especially as AI-generated content and deepfakes become harder to distinguish from real reporting. Legal liability will force change faster than ethics will.

What's the role of human fact-checkers going forward? They become automation supervisors. Less grunt work, more strategic decision-making. Machines handle volume; humans handle judgment.

Should social media algorithms be regulated differently, given how they spread misinformation? Probably. Platforms optimize for engagement, not truth. Regulatory frameworks that treat misinformation distribution as a liability could force algorithm redesigns.

The bottom line: Automation is amoral. It does what you tell it to do. When newsrooms automated publishing speed without building in verification rigor, they created this mess. The retraction isn't a victory for truth; it's the product of legal pressure. Real change requires rebuilding editorial workflows where humans control the critical decisions and algorithms handle the volume.
