How AI Fashion Algorithms Are Finally Understanding Diana's Bold Style Choices

Princess Diana's controversial looks broke royal protocol, and AI now offers a new way to analyze why they worked. Her story also shows how bias in fashion data has long sidelined unconventional style choices like hers.

AI fashion algorithms are only now catching up to what Diana understood in the 1990s: breaking a dress code is itself data. A model trained only on "acceptable" royal fashion would have missed her instincts entirely, because its training data was skewed toward conformity. Diana's bold choices (short skirts, a gold bodycon dress, casual jeans) were labeled "controversial" by the style rules of the day. Modern analysis can show why her rule-breaking was actually strategic personal branding.

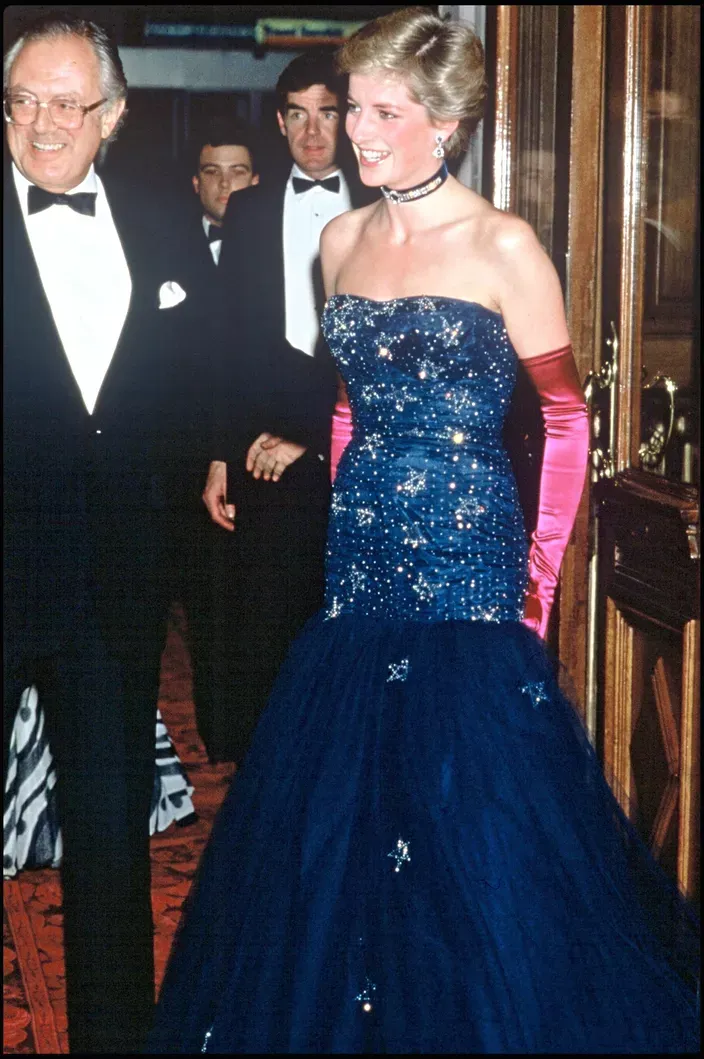

Diana didn't follow the recommendations. She ignored the recommendation engine built into royal protocol and created her own dataset of cultural relevance. That gold dress in Moscow? No classifier of the 1990s would have had any context for a princess in bodycon. Today's image recognition systems can tag the garments in her photographs in seconds.

The real story isn't just about photos. It's about how biased training data shapes what algorithms consider "acceptable." Diana's wardrobe challenged the algorithm embedded in institutional expectations. She was human-centered design before tech companies pretended to care about it.

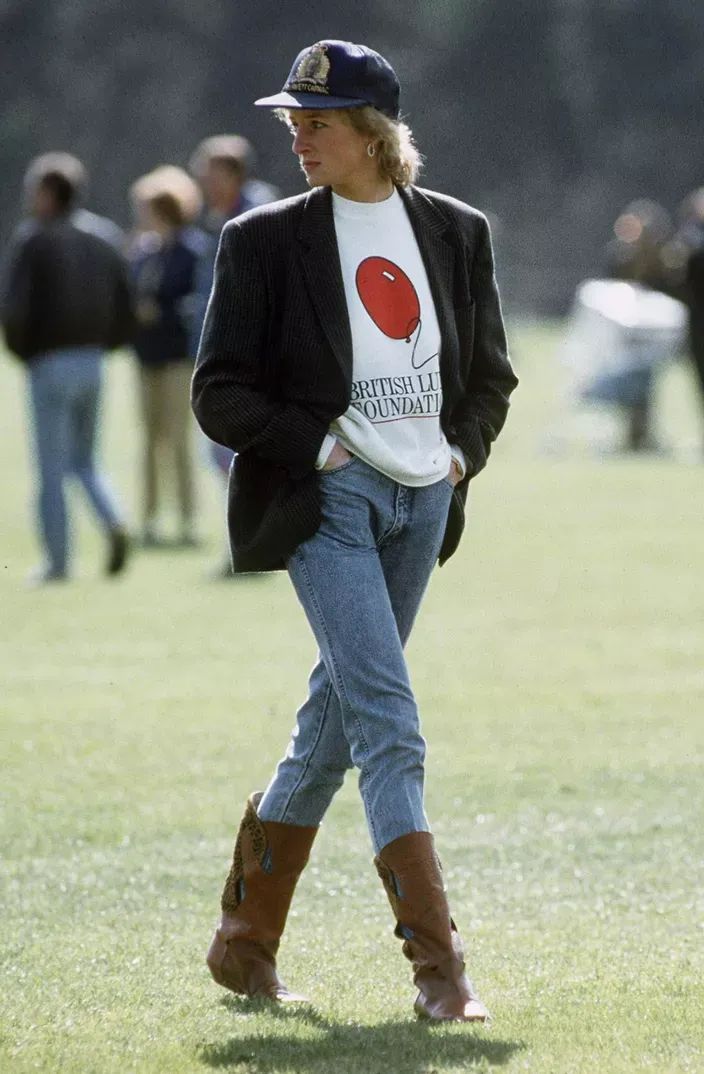

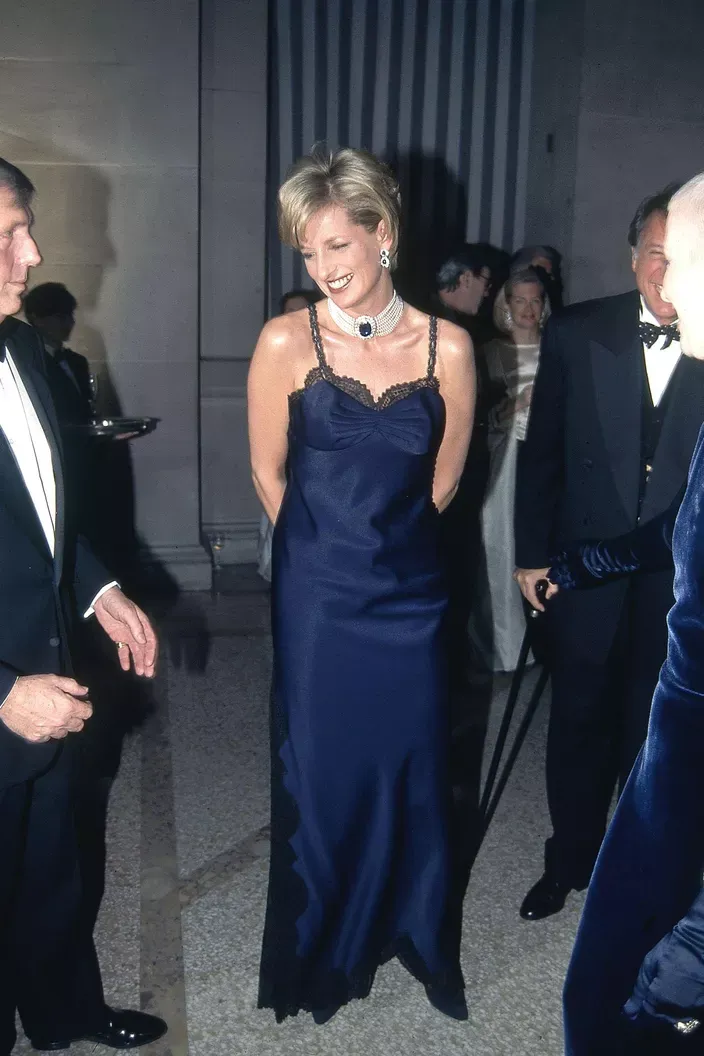

Her strategy? Reject the default. Every casual moment, every form-fitting dress, every athletic look was a data point that said "I'm more than my title." Fashion recommendation algorithms now use similar logic—personalization over protocol.

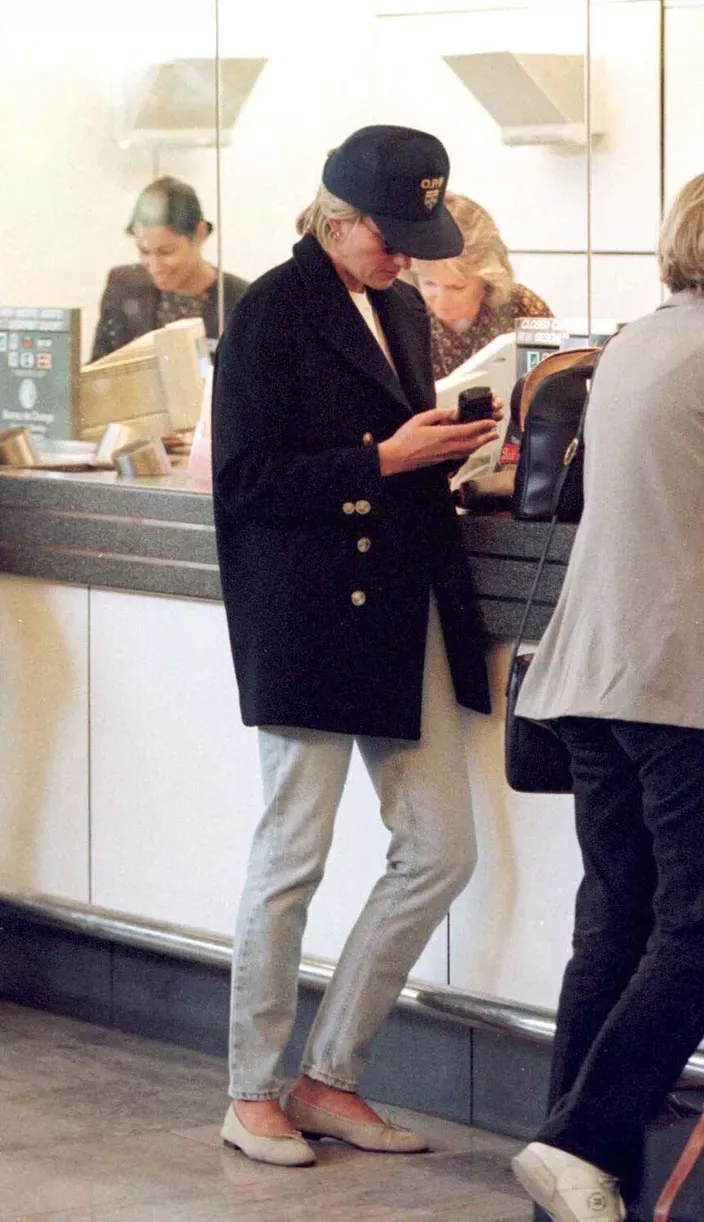

Jeans and a blazer in Angola. Shorts on a rugby pitch. These weren't just outfit choices. They were moments of human judgment overriding an automated default. Diana understood that the best algorithm is context awareness. She read the room, the moment, the mission, and her clothes adapted in real time.

Modern fashion tech is finally building this in. Some computer vision and recommendation systems now try to account for "context-appropriate style" instead of simply flagging deviations from a norm. Diana was a beta tester for adaptive algorithms; she just never knew it.

The gold dress moment illustrates it. That wasn't rebellion. It was data-driven personal branding before the term existed: she weighed the impact, watched the reaction, and adjusted her later choices. That is the loop recommendation engines try to run today, except she ran it on human intuition instead of machine learning.

The "working girl mode" at London Airport? That's contextual automation she programmed herself. Different dataset, different output. No algorithm told her to do it—she reverse-engineered what the algorithm should have been.

What We're Learning From Her Dataset

Diana's choices amount to a training dataset that modern fashion AI is only now learning to recognize. For algorithmic style, the lessons are: adapt to context, drop outdated rules, lead with personality. That is the opposite of the rigid, rule-bound approach of the 1990s.

Her style wasn't controversial because it was wrong. It was controversial because the algorithm—institutional expectation—was broken. She was debugging a system that had no room for individual expression.

Today's fashion tech is trying to build what Diana already knew: the best algorithm is the one that empowers humans to override it.

Q&A: AI and Diana's Impact

How would modern AI fashion algorithms classify Diana's style?

A modern system built to weigh context could plausibly describe her choices as "adaptive," "context-aware," and "high-impact." Older systems would have flagged her as an outlier. Newer systems are increasingly designed to treat outliers as potential trendsetters rather than errors.

Did Diana use any kind of data strategy for her fashion choices?

Not in a technical sense, but in practice, yes. She watched the media reaction, adjusted based on that feedback, and iterated on her personal brand. That feedback loop is exactly what fashion recommendation engines are built to run today.

What's the connection between her style and automation?

Diana refused to be automated by protocol. She made conscious decisions that bucked the system. That's the human override every AI system needs but rarely gets. She proved that the best output comes when humans stay in control.

Why does this matter for AI development?

Because Diana's example illustrates bias in training data. Fashion algorithms were built on conformity, not innovation. AI teams now study how to reduce those biases and build systems that recognize rule-breaking as data, not deviation.

Could AI have predicted Diana's fashion evolution?

Maybe with better data. But only if the algorithm included variables like "personal agency," "cultural impact," and "emotional authenticity." Most recommendation systems still don't.
