AI Image Stacking Reveals Hidden Worlds: How Algorithms Transform Musical Instrument Photography

Photographer Charles Brooks uses thousands of AI-processed focus-stacked images and algorithmic compositing to reveal the hidden architecture inside Stradivarius violins and Steinway pianos. His work shows how automation and computational photography are transforming how we document craftsmanship.

By YEET Magazine Staff, YEET Magazine

Published November 1, 2025


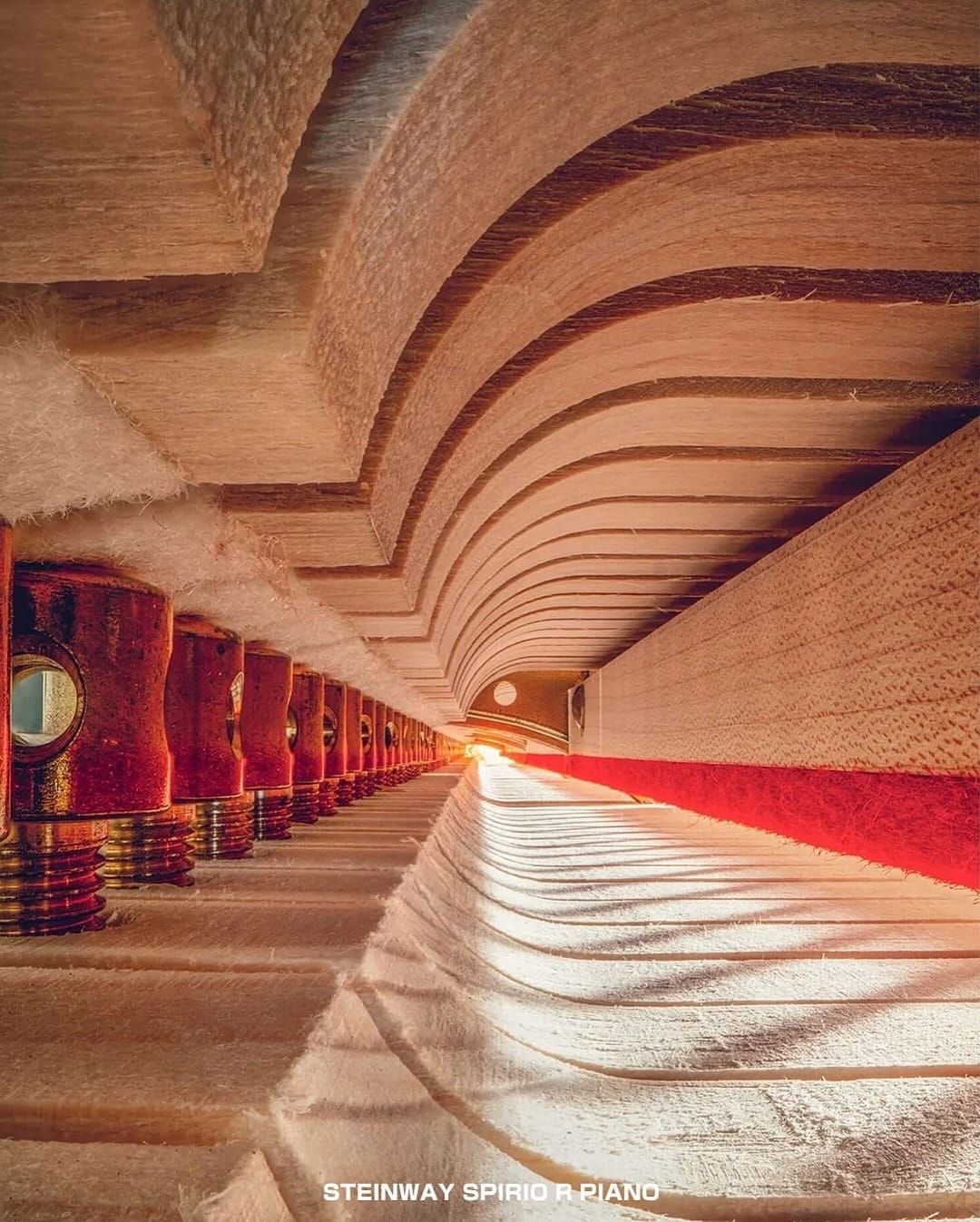

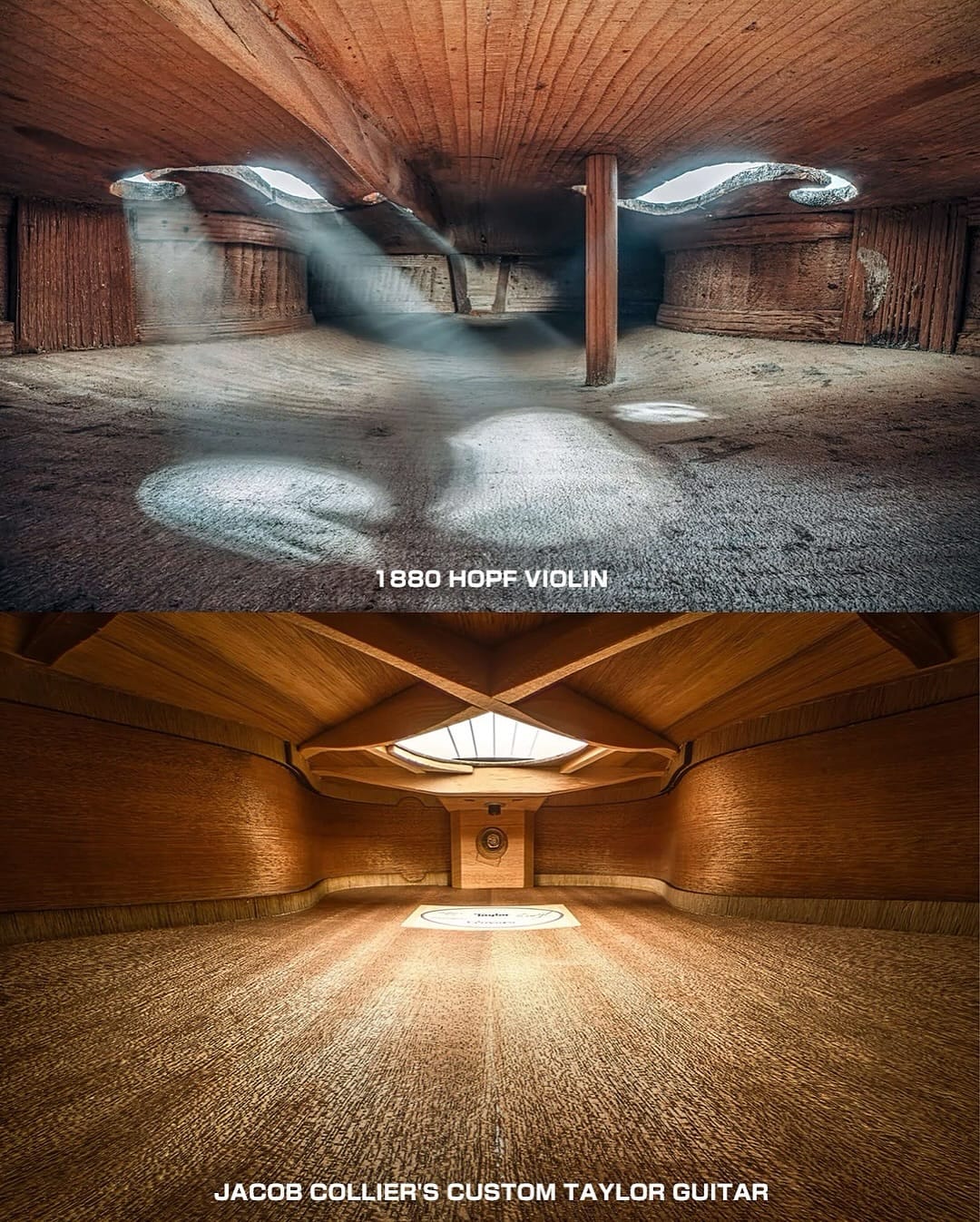

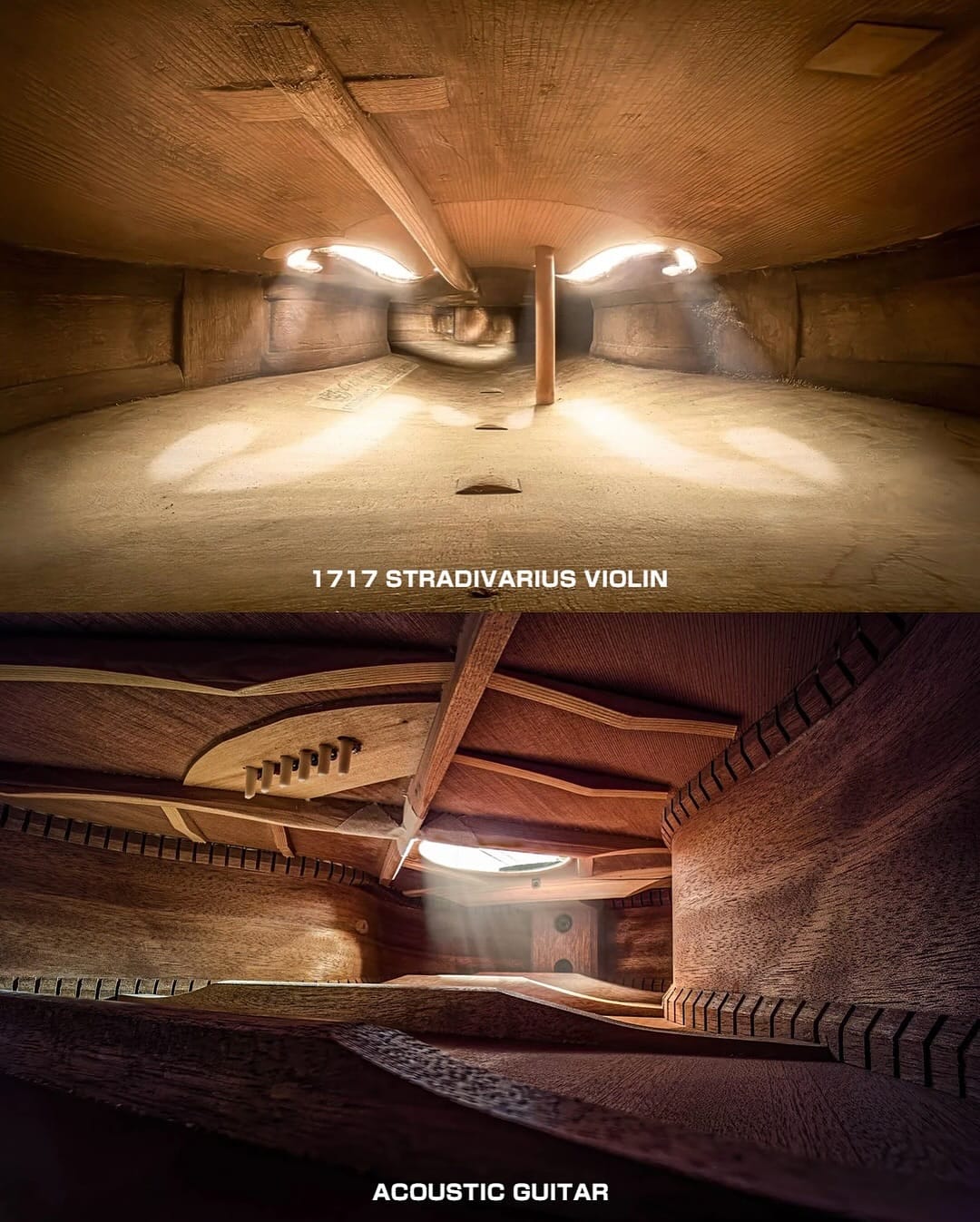

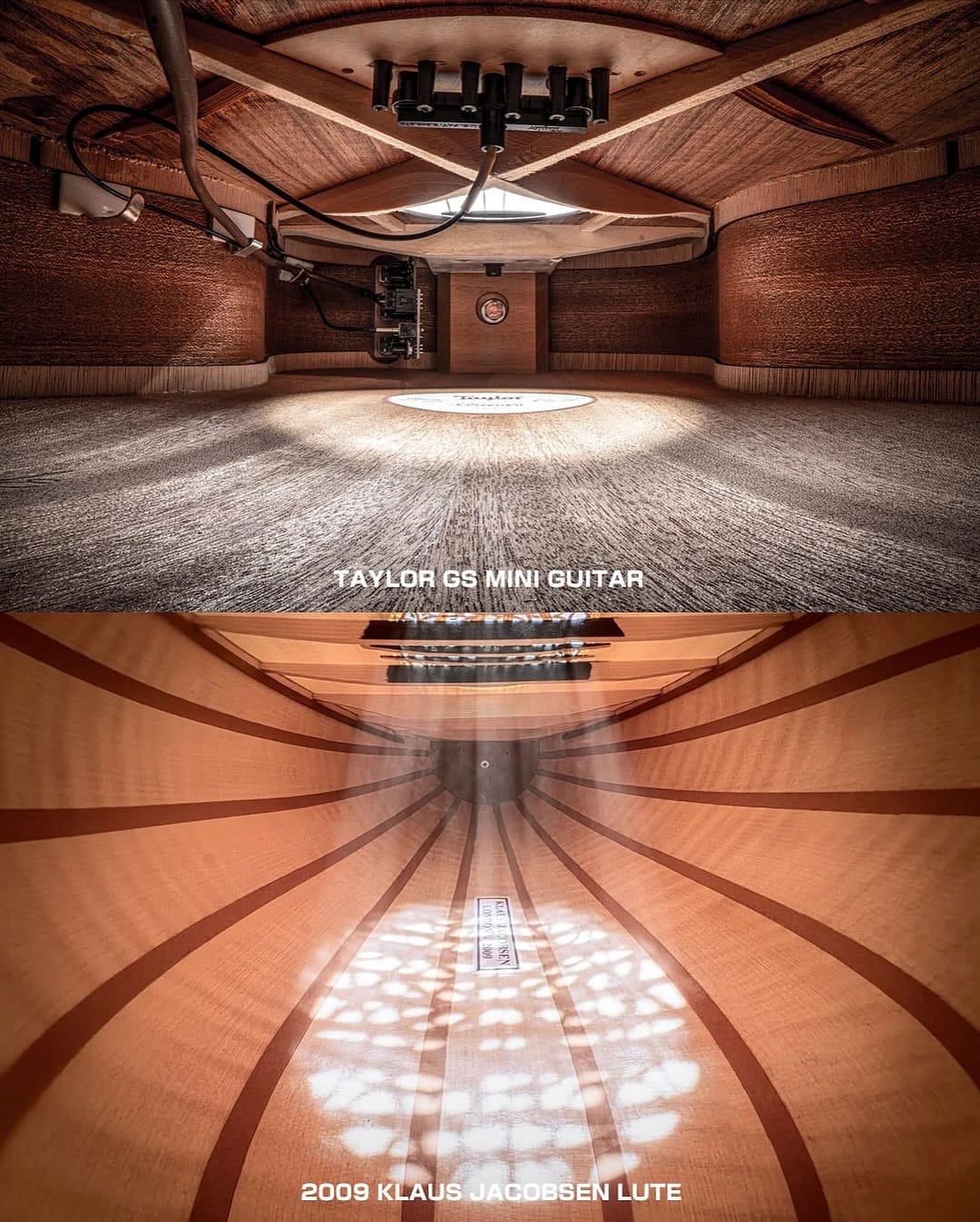

Australian cellist and photographer Charles Brooks (@charlescellist) uses computational photography to unveil the unseen. With focus-stacking algorithms, endoscopic lenses, and thousands of automatically composited images, Brooks transforms the interiors of Steinway pianos, Stradivarius violins, flutes, and cellos into vast, cathedral-like architectural spaces. His series, Architecture in Music, shows that AI-assisted image processing isn't just for tech startups; it is also changing how artists document centuries-old craftsmanship.

The series has captivated audiences worldwide, showing that even within the silent walls of an instrument there is a whole world, one that algorithms can finally make visible.

"It's like walking inside sound," Brooks told YEET Magazine. "These are not abandoned spaces. They're living — every mark, every layer of dust, every scratch carries the story of the people who played them. Technology lets us see that story."

How Focus-Stacking Algorithms Reveal Hidden Details

Brooks' technique relies on computational focus stacking: hundreds or thousands of frames, each focused at a slightly different depth, are blended by software that keeps the sharpest parts of every frame, producing an image that is sharp from front to back. At such close range a lens renders only a thin slice of the scene in focus, so no single exposure could capture this much detail.

Inside one Stradivarius violin, the algorithm revealed what looked like ancient vaults, arched and ribbed like Gothic architecture. In a Lockey Hill cello, the stacked images peered up into the grain of the maple back, lit like a golden chapel.

Each final photograph is a composite of hundreds to thousands of algorithmically aligned shots, processed through computational imaging software that automatically calculates depth maps and merges layers. The results blur the line between science, art, and data visualization.
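For readers curious what "calculating depth maps and merging layers" can look like in practice, here is a minimal Python sketch of one common approach. It is an illustration under stated assumptions, not Brooks' pipeline or the method used inside Helicon Focus or Zerene Stacker: the frames are assumed to be already aligned, grayscale, and the same size; sharpness is scored with a simple Laplacian filter; and the function names `laplacian_sharpness` and `focus_stack` are made up for this example.

```python
import numpy as np


def laplacian_sharpness(img):
    """Score local sharpness as the absolute 4-neighbour Laplacian response."""
    padded = np.pad(img, 1, mode="edge")  # repeat border pixels so output keeps its shape
    lap = (
        padded[:-2, 1:-1] + padded[2:, 1:-1]      # pixels above and below
        + padded[1:-1, :-2] + padded[1:-1, 2:]    # pixels left and right
        - 4.0 * padded[1:-1, 1:-1]                # centre pixel
    )
    return np.abs(lap)


def focus_stack(frames):
    """Merge aligned grayscale frames by keeping, per pixel, the sharpest frame.

    Returns the merged image and a "depth map" holding, for each pixel,
    the index of the frame that was sharpest there.
    """
    stack = np.stack([np.asarray(f, dtype=np.float64) for f in frames])
    sharpness = np.stack([laplacian_sharpness(f) for f in stack])
    depth_map = np.argmax(sharpness, axis=0)
    merged = np.take_along_axis(stack, depth_map[None, ...], axis=0)[0]
    return merged, depth_map
```

Calling `focus_stack([frame_a, frame_b, ...])` returns the composite plus the per-pixel frame index. Production stacking tools typically go further, for example by smoothing the depth map and blending across seams, which this sketch deliberately leaves out.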

Endoscopic Tech & Automation Changing Art Documentation

Brooks' technique repurposes endoscopic imaging, a technology originally designed for medical and industrial inspection, and pairs it with automated image-processing pipelines. The narrow scope navigates tight instrument interiors, but it is the software that turns the resulting frames into a single sharp image.

Without automated focus stacking, these images would not be practical to make. The computation needed to align and blend thousands of frames into one composite represents a shift in how artists collaborate with AI.
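The alignment step can be illustrated with a short Python sketch as well. The version below uses phase correlation, a standard FFT-based way to estimate how far one frame is shifted relative to another. It is a simplification chosen for this example rather than a description of Brooks' workflow: it only recovers whole-pixel shifts, treats the image as wrapping around at its edges, and ignores the scale and rotation changes that real focus stacks often show. The names `estimate_shift` and `align_to_reference` are hypothetical.

```python
import numpy as np


def estimate_shift(reference, moved):
    """Estimate the integer (dy, dx) shift that maps `reference` onto `moved`."""
    f_ref = np.fft.fft2(reference)
    f_mov = np.fft.fft2(moved)
    cross = f_mov * np.conj(f_ref)
    cross /= np.maximum(np.abs(cross), 1e-12)  # keep only phase; avoid dividing by zero
    corr = np.fft.ifft2(cross).real            # peaks at the displacement
    peak = np.unravel_index(np.argmax(corr), corr.shape)
    # Peaks past the midpoint correspond to negative shifts (wrap-around).
    return tuple(
        int(p) if p <= size // 2 else int(p) - size
        for p, size in zip(peak, corr.shape)
    )


def align_to_reference(reference, moved):
    """Undo the estimated shift so `moved` lines up with `reference`."""
    dy, dx = estimate_shift(reference, moved)
    return np.roll(moved, shift=(-dy, -dx), axis=(0, 1))
```

In a full pipeline, each frame would be aligned to a chosen reference frame in this way before any sharpness comparison or blending takes place.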

"I wanted to show the architecture that sound lives in. The inside of a cello looks like a cathedral because it is—it's a space built for resonance. But you need data, you need computational power, to reveal it."

His work has appeared in The Guardian, Smithsonian Magazine, and major design blogs, sparking conversations about how computational photography is democratizing access to details once reserved for instrument restoration labs.

The Future: AI Authenticating Instruments

Brooks' work hints at a larger future: as these imaging datasets grow, machine learning algorithms could be trained to authenticate instruments, detect counterfeit Stradivarius violins, or predict instrument degradation. The interior surfaces he's documenting are becoming training data for AI restoration and conservation.

Museums and collectors are already exploring how computational analysis of instrument interiors—the wood grain patterns, repair signatures, historical modifications—could be catalogued and analyzed by neural networks.

Questions People Actually Ask

How many images go into one Brooks photograph?

Anywhere from 500 to 5,000+ individual shots, depending on the instrument's complexity. Stacking software automatically aligns and blends them into a single composite.

Could AI completely automate this process?

Not yet. Brooks still controls composition, lighting, and creative direction. The algorithm is a tool, not a replacement—similar to how Photoshop enhanced photography without replacing photographers.

Are these images being used for conservation?

Yes. Museums are beginning to request Brooks' archives for condition assessment and historical documentation. The high-resolution data is becoming part of permanent conservation records.

What software does Brooks use?

He combines endoscopic camera equipment with Helicon Focus, Zerene Stacker, and custom Python scripts to process the image stacks. No off-the-shelf "AI" app—this is bespoke computational imaging.

Could this work be replicated by AI without human photographers?

Theoretically, autonomous robotic systems could navigate instruments and capture images. But artistic intent—deciding what's worth seeing—still requires human vision.
