AI Pet Translators Are Coming—Here's What It Means for Your Relationship With Animals

Researchers are using AI and machine learning to decode pet vocalizations and body language into human-readable emotions. But true pet-to-human conversation is far more complicated than just translating sounds.

By YEET Magazine Staff — October 18, 2025

Your dog stares at you for ten seconds straight, tail wagging. Your cat meows three times at 2 a.m. What if AI could actually tell you what they mean? Researchers are developing machine learning systems to translate animal vocalizations and body language into human language by analyzing thousands of hours of recordings and contextual behavioral data. The technology could transform pet care, veterinary treatment, and even animal rights—but it also raises serious ethical questions about what happens when animals have a voice we can finally understand.

How AI is learning to decode animal communication

Recent advances in machine learning and bioacoustics have made it possible to analyze animal sounds with many of the same techniques used to process human speech.

By feeding AI models thousands of hours of recordings of dogs barking, cats meowing, and birds singing—along with contextual data about their behavior—scientists are teaching algorithms to recognize patterns that link specific sounds to specific emotions or needs.

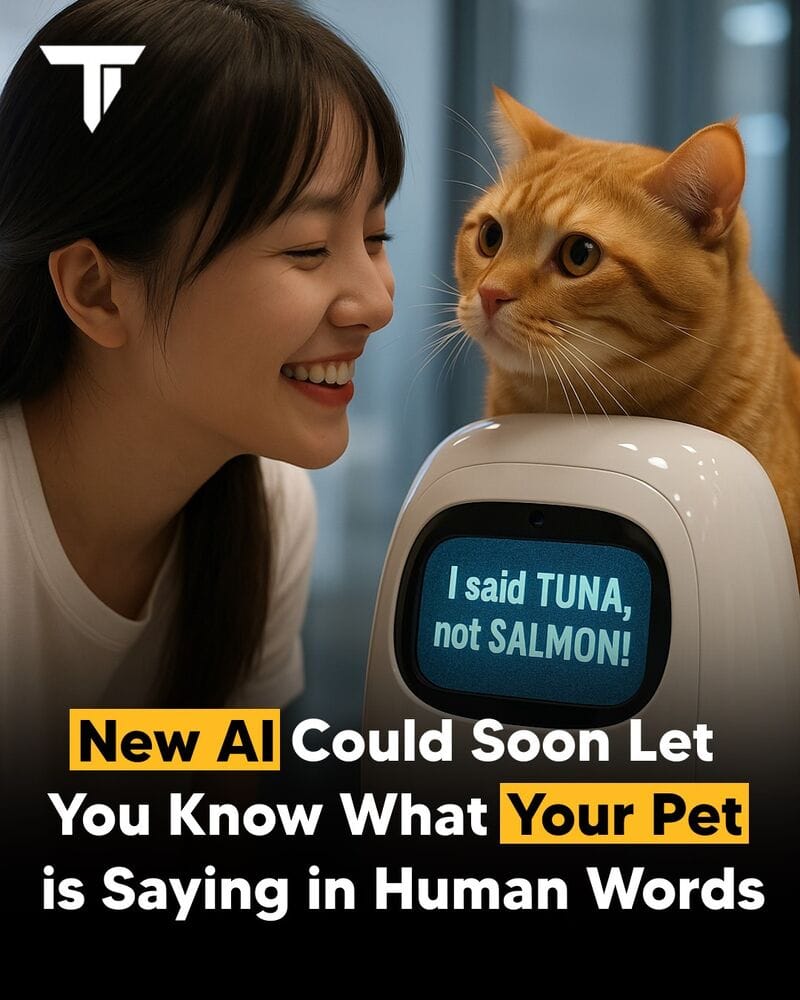

Researchers at the University of Tokyo have developed a machine learning model that can detect a dog's emotional state (happy, angry, anxious) based solely on the tone and rhythm of its bark. Meanwhile, startups like Zoolingua and Petpuls are already testing early "AI pet translator" devices that promise to interpret basic emotions through sound analysis.
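
To make the idea concrete, here is a minimal sketch of how a sound-to-emotion classifier might work. It is not the method used by any of the researchers or companies named above. The two features (average pitch and barks per second), the numbers, the labels, and the nearest-centroid approach are all assumptions chosen for illustration.

```python
import math

# Hypothetical training data: (mean pitch in Hz, barks per second) -> label.
# Every number here is invented for illustration, not a real measurement.
TRAINING = [
    ((650.0, 1.0), "happy"),
    ((700.0, 1.5), "happy"),
    ((300.0, 3.5), "angry"),
    ((350.0, 4.0), "angry"),
    ((900.0, 5.0), "anxious"),
    ((950.0, 6.0), "anxious"),
]

# Rough per-feature scales so that pitch (hundreds of Hz) does not
# swamp bark rate (single digits) in the distance calculation.
SCALE = (100.0, 1.0)


def centroids(samples):
    """Average the feature vectors of each label."""
    sums = {}
    for features, label in samples:
        total, count = sums.get(label, ([0.0] * len(features), 0))
        sums[label] = ([t + f for t, f in zip(total, features)], count + 1)
    return {
        label: tuple(t / count for t in total)
        for label, (total, count) in sums.items()
    }


def classify(features, cents):
    """Return the label whose centroid is closest to the given features."""
    def dist(a, b):
        return math.sqrt(sum(((x - y) / s) ** 2 for x, y, s in zip(a, b, SCALE)))
    return min(cents, key=lambda label: dist(features, cents[label]))


CENTROIDS = centroids(TRAINING)
print(classify((680.0, 1.2), CENTROIDS))
```

A real system would learn from thousands of hours of audio and far richer features, but the basic move is the same: turn a sound into numbers, then find the labeled examples it most resembles.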

This isn't just about curiosity. If AI can help humans better understand what animals feel, it could reshape pet care, veterinary treatment, and even animal rights advocacy.

Why AI pet translation is harder than it sounds

Here's the catch: language is more than just sound. Humans use syntax, culture, and shared meaning—things animals don't have in the same way.

Even if an AI can tell you that your cat's meow means "feed me," it's not the same as actual conversation. What we're really getting is a translation of emotional intent, not literal speech.

Context matters too. A growl could mean play in one situation and aggression in another. AI can find patterns across thousands of data points, but it can't always capture nuance: the reason your particular dog is barking at 3 a.m. may depend on information the algorithm never sees.
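
The context problem can be shown in a few lines. This is a toy sketch, not how any real product works; the sound names, context names, and interpretations are all hypothetical. The point is that the same sound maps to different meanings depending on context, and that without context the honest answer is "unclear".

```python
# Hypothetical lookup: the same sound means different things in
# different contexts. All keys and values are invented for illustration.
INTERPRETATIONS = {
    ("growl", "tug_toy"): "play",
    ("growl", "stranger_at_door"): "warning",
}


def interpret(sound, context=None):
    """Return an interpretation, or 'unclear' when context is missing or unknown."""
    return INTERPRETATIONS.get((sound, context), "unclear")


print(interpret("growl", "tug_toy"))           # play
print(interpret("growl", "stranger_at_door"))  # warning
print(interpret("growl"))                      # unclear
```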

So while we may be getting closer to hearing what animals are "saying," true understanding still requires human intuition and familiarity with individual animals.

The ethical minefield of AI animal communication

If AI could really translate animal emotions, the consequences would be profound.

Imagine learning that your dog feels lonely while you're at work. Or that your cat hates the new food you bought. It could bring pet owners closer to their animals—but it could also introduce guilt, responsibility, and moral dilemmas you weren't prepared for.

Would you still take your dog on a plane if the AI told you it's terrified? Would farms or zoos operate the same way if animals could express fear or pain in words humans could understand?

Animal-rights activists believe that AI animal communication could force a cultural shift, pushing society to view animals not just as companions or resources, but as sentient beings with voices worth hearing. A change on that scale could reshape industries from agriculture to entertainment.

What happens next?

We're still in early days. Current AI pet translators work best with clear, strong emotional signals—a dog's aggressive bark or a cat's pain meow. Subtle emotions, complex needs, and context-dependent communication are still beyond what these algorithms can reliably decode.

But the technology is improving fast. Within 5-10 years, we could have consumer-grade AI devices that give fairly accurate emotional readings of our pets. Whether that's a good thing depends on whether we're ready for what our animals have to say.

Common questions about AI pet translation

Can AI really understand what animals are thinking? Not yet. Current systems decode emotional intent from sounds and body language, but they're interpreting data patterns, not reading minds. There's a big difference between "this bark sounds aggressive" and "your dog is thinking about aggression."

When will AI pet translators be available to consumers? Early versions are already being tested. Expect basic emotion-reading apps and devices within 2-3 years, with more accurate consumer-grade devices likely further out. Accuracy will vary by species and by individual animal.

Could AI pet translators make us better pet owners? Possibly. Understanding your pet's stress levels or health issues earlier could improve care. But it could also create anxiety or guilt if you learn your pet is unhappy with something you can't fix.

What about wild animals? Scientists are also using AI to decode vocalizations in endangered species like whales and elephants. This could transform conservation efforts by helping us understand animal behavior without invasive observation.

Will this change animal rights laws? If AI can demonstrate that animals have complex emotional lives, it could strengthen arguments for stricter animal welfare regulations. Some legal scholars are already discussing how AI-translated animal "testimony" might factor into future animal protection laws.

Read next

Check out our piece on how AI is automating veterinary diagnostics to see how machine learning is reshaping pet healthcare in other ways. Or explore the ethics of using algorithms to monitor animal welfare to dive deeper into the moral questions surrounding animal surveillance tech.
