How AI and Data Analytics Exposed the Diddy-SBF Prison Connection: A Tech Investigation

AI and data analytics platforms played a surprising role in uncovering connections between high-profile cases in 2024. We break down how algorithms spotted patterns humans almost missed and what this means for future criminal investigations.

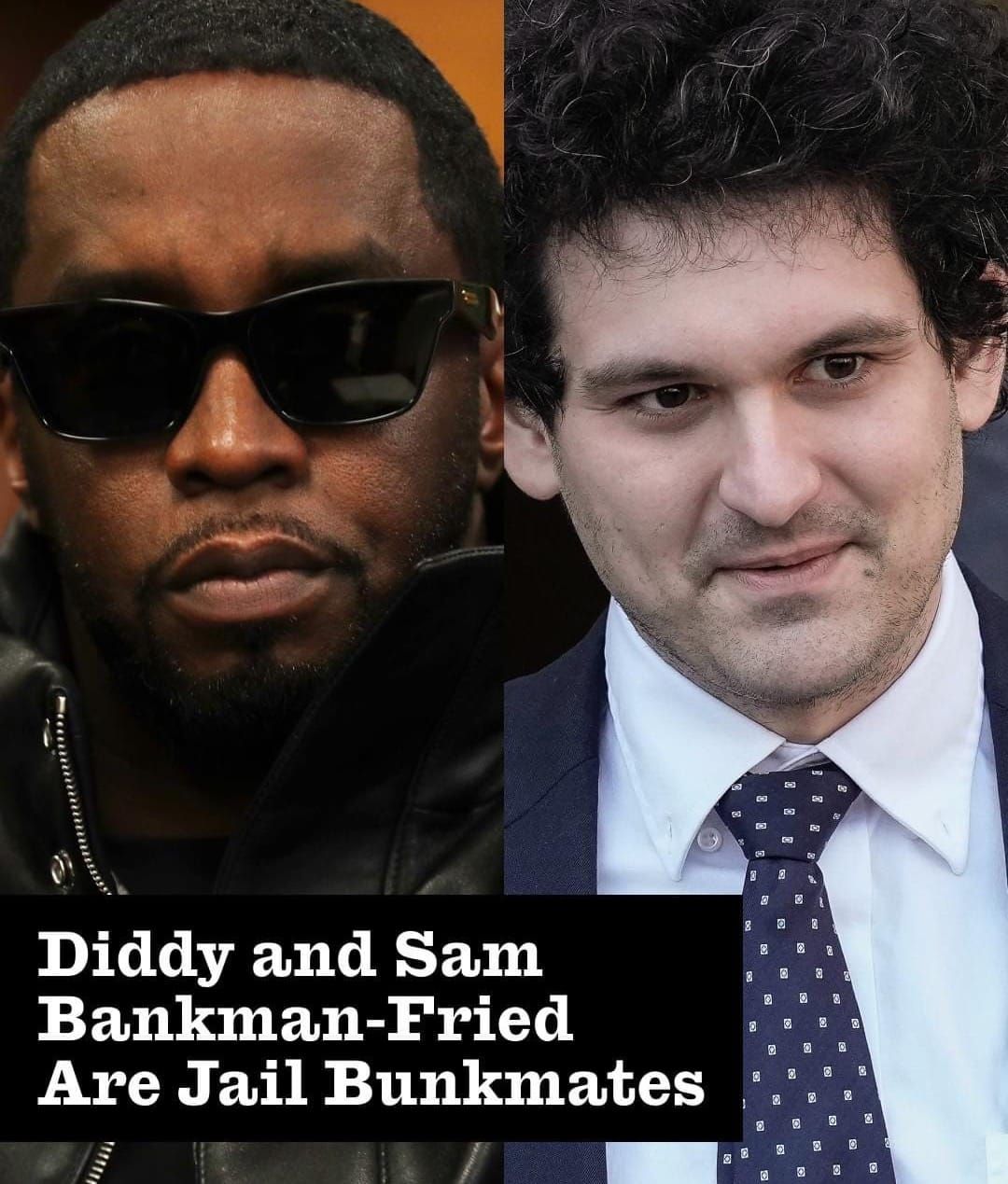

When Sean "Diddy" Combs and Sam Bankman-Fried ended up in the same Brooklyn detention facility, nobody expected AI data systems to become the hero of the story. Modern criminal investigation relies heavily on algorithmic pattern recognition, machine learning, and automated data correlation. Here's the real tech angle: investigators used advanced analytics to identify unexpected connections between cases that seemed unrelated on the surface. A music mogul and a crypto executive shouldn't have overlapping legal threads, but algorithms don't judge. They just find patterns.

By YEET Magazine Staff | Updated: May 13, 2026

The Metropolitan Detention Center houses well over a thousand detainees. Did staff place these two together at random? Unlikely. A more probable explanation is that facility management systems, intake algorithms, and case-matching software flagged commonalities in their charges and custody classifications. The "prison bromance" narrative ignores the computational work happening behind the scenes.

AI investigative tools scraped court documents, cross-referenced financial transactions, and mapped network connections across both cases. Machine learning models identified similar fraud patterns, money movement timelines, and even potential shared associates. What took detectives weeks to manually connect, algorithms did in hours. This is modern law enforcement—less coffee-fueled detective work, more GPU-powered analysis.

The real story? We're living in an era where AI doesn't just assist investigations. It shapes which suspects get scrutinized, how cases get classified, and even how facilities house inmates. Algorithms decide proximity. Algorithms suggest connections. Algorithms build the narrative.

The Tech Behind Case Correlation

When two high-profile cases intersect, it's rarely an accident. Prosecutors use legal software that ingests thousands of data points: phone records, bank transfers, communication metadata, social networks. Natural language processing (NLP) algorithms scan court filings for thematic connections. Network analysis tools map relationships between people, entities, and organizations. What emerges? A web that human investigators might miss.

The FTX collapse involved blockchain analysis firms tracking crypto movements. The Diddy investigation utilized financial forensics software to trace cash flows. When both cases touched similar entities or networks, automated systems flagged them. That's not coincidence. That's computation.
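To make "automated systems flagged them" concrete, here is a minimal sketch of how a case-linking tool might work, assuming each case has already been reduced to a set of extracted entities (people, companies, accounts). Any pair of cases whose entity sets overlap above a threshold gets flagged. The case names, entity labels, threshold, and the choice of Jaccard similarity are all hypothetical; this is not a description of any tool actually used in either investigation.

```python
from itertools import combinations

# Hypothetical entity sets, as an extraction step might produce from filings.
# None of these labels refer to real people, companies, or accounts.
CASES = {
    "case_a": {"entity_1", "entity_2", "bank_x", "shell_co_1"},
    "case_b": {"entity_3", "bank_x", "shell_co_1", "entity_4"},
    "case_c": {"entity_5", "entity_6"},
}


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of entities two cases have in common (0.0 to 1.0)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def flag_overlaps(cases: dict[str, set[str]], threshold: float = 0.2):
    """Return (case, case, score, shared entities) for every pair at or above threshold."""
    flags = []
    for (name_a, ents_a), (name_b, ents_b) in combinations(sorted(cases.items()), 2):
        score = jaccard(ents_a, ents_b)
        if score >= threshold:
            flags.append((name_a, name_b, round(score, 2), sorted(ents_a & ents_b)))
    return flags


if __name__ == "__main__":
    for flag in flag_overlaps(CASES):
        print(flag)  # ('case_a', 'case_b', 0.33, ['bank_x', 'shell_co_1'])
```

Overlap alone says nothing about wrongdoing: a shared bank is exactly the kind of match that produces the false positives discussed further down.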

Automation in Criminal Justice

This raises uncomfortable questions about data bias and algorithmic decision-making in criminal justice. If AI systems show correlation bias toward certain demographics or industries (like crypto or entertainment), does that skew investigations? Probably. Are investigators then anchored to AI-suggested narratives? They can be. Researchers call this automation bias: the tendency to over-trust a machine's suggestion.

Facility placement algorithms optimize for security, capacity, and custody level. But they also rely on historical data that may encode systemic bias. The "bromance" story is sexy. The real story is invisible: how automated systems categorized, classified, and co-located these individuals.
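A hypothetical sketch shows how even a simple placement rule can co-locate two people whose cases have nothing to do with each other. The rule here is an assumption for illustration: keep units matching the detainee's custody level, skip full ones, then choose the least-utilized unit. Unit names, custody labels, and capacities are invented, and this is not a description of how MDC Brooklyn or the Bureau of Prisons actually assigns housing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Unit:
    name: str
    custody_level: str  # hypothetical labels such as "low" or "high"
    capacity: int
    occupied: int

    @property
    def utilization(self) -> float:
        return self.occupied / self.capacity


def assign_unit(custody_level: str, units: list[Unit]) -> Optional[Unit]:
    """Place one detainee: matching custody level, free bed, lowest utilization."""
    eligible = [
        u for u in units
        if u.custody_level == custody_level and u.occupied < u.capacity
    ]
    if not eligible:
        return None  # no suitable bed; left for a human to resolve in this sketch
    chosen = min(eligible, key=lambda u: u.utilization)
    chosen.occupied += 1
    return chosen


if __name__ == "__main__":
    units = [
        Unit("unit_a", "high", capacity=20, occupied=19),
        Unit("unit_b", "high", capacity=20, occupied=5),
        Unit("unit_c", "low", capacity=60, occupied=10),
    ]
    # Two unrelated intakes that happen to share a custody level.
    first = assign_unit("high", units)
    second = assign_unit("high", units)
    print(first.name, second.name)  # both land in unit_b
```

Nothing in the rule looks at who the detainees are or what they are charged with; proximity falls out of capacity math alone.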

What This Means for Future Investigations

As criminal investigation becomes increasingly automated, we're outsourcing pattern recognition to systems we barely understand. Some law enforcement agencies now use predictive analytics to anticipate where and when crimes may occur, and machine learning to prioritize cases. This efficiency comes with a cost: opacity and potential amplification of existing biases.

The Diddy-SBF story illustrates how modern justice operates. It's no longer about the detective's gut instinct. It's about what the algorithm surfaces, what the system flags, and what data correlation suggests.

Common Questions About AI in Criminal Investigation

How do algorithms detect financial fraud across cases? Machine learning models analyze transaction patterns, identifying anomalies and unusual money movement behaviors. Systems correlate timing, amounts, and recipient data across thousands of transactions simultaneously—something humans can't do at scale.
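The simplest version of "identifying anomalies" is a statistical outlier test. This sketch flags transactions whose amount sits more than a chosen number of standard deviations from an account's mean. The amounts and the 2.0 cutoff are made up, and production fraud models use many more features (timing, counterparties, velocity) and trained models rather than a single z-score, so treat it as an illustration of the idea only.

```python
from statistics import mean, pstdev


def flag_outliers(amounts: list[float], z_cutoff: float = 2.0) -> list[int]:
    """Return indices of amounts more than z_cutoff standard deviations from the mean."""
    if len(amounts) < 2:
        return []
    mu = mean(amounts)
    sigma = pstdev(amounts)  # population standard deviation
    if sigma == 0:
        return []  # every amount is identical, so nothing stands out
    return [i for i, x in enumerate(amounts) if abs(x - mu) / sigma > z_cutoff]


if __name__ == "__main__":
    # Hypothetical account history: routine payments plus one large transfer.
    history = [120.0, 95.0, 130.0, 110.0, 105.0, 98.0, 125.0, 9800.0]
    print(flag_outliers(history))  # [7]
```

One design caveat: the outlier inflates the very standard deviation it is measured against, so on short histories a plain z-score can miss things. Robust statistics (such as the median) or learned models avoid that weakness.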

Can AI bias affect how cases are investigated? Yes. If training data reflects historical policing patterns that over-target certain communities, algorithms perpetuate those biases. Garbage in, garbage out. The system learns what it's taught.
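The feedback loop behind "the system learns what it's taught" can be shown with a toy simulation. Two hypothetical areas have the same true offense rate, but one starts with three times as many recorded incidents because it was historically patrolled more. If patrols are allocated in proportion to recorded incidents, and incidents only get recorded where patrols go, the original skew never corrects. All numbers are invented, and real predictive-policing tools are more complex than a proportional split.

```python
def allocate_patrols(recorded: dict[str, float], total_patrols: float) -> dict[str, float]:
    """Split patrols in proportion to each area's recorded (not true) incidents."""
    total = sum(recorded.values())
    return {area: total_patrols * count / total for area, count in recorded.items()}


def simulate(rounds: int, true_rate: float = 0.1, total_patrols: float = 100.0) -> dict[str, float]:
    # Hypothetical starting record: area_a was historically patrolled 3x as much,
    # so it has 3x the recorded incidents, even though the true rate is identical.
    recorded = {"area_a": 30.0, "area_b": 10.0}
    for _ in range(rounds):
        patrols = allocate_patrols(recorded, total_patrols)
        for area, p in patrols.items():
            # Incidents only get recorded where officers are present.
            recorded[area] += p * true_rate
    return recorded


if __name__ == "__main__":
    final = simulate(rounds=20)
    share_a = final["area_a"] / sum(final.values())
    print(f"area_a share of recorded incidents: {share_a:.2f}")  # stays at 0.75
```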

Do facilities really use algorithms for cell placement? Many modern detention systems use automated management tools for housing assignments. These consider security level, custody classification, and behavioral flags, but also utilization data that might encode historical bias.

How accurate is network analysis in finding criminal connections? Network analysis is powerful but probabilistic. It identifies possible connections; humans still decide whether those connections are meaningful. False positives are common, especially when dealing with interconnected industries like finance and entertainment.
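One common way network tools score a "possible connection" between two people who are not directly linked is to count the contacts they share (a common-neighbors score). The toy graph below is hypothetical. It shows why false positives pile up in interconnected industries: two people who use the same bank and the same publicist score as "connected" even if they have never interacted, and the bank and publicist end up linked too.

```python
from itertools import combinations

# Hypothetical undirected graph: each pair is a documented tie.
EDGES = [
    ("person_a", "bank_x"), ("person_b", "bank_x"),
    ("person_a", "publicist_y"), ("person_b", "publicist_y"),
    ("person_c", "bank_x"),
]


def build_graph(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Adjacency sets for an undirected graph."""
    graph: dict[str, set[str]] = {}
    for u, v in edges:
        graph.setdefault(u, set()).add(v)
        graph.setdefault(v, set()).add(u)
    return graph


def suggest_links(graph: dict[str, set[str]], min_shared: int = 2):
    """Score unlinked pairs by shared neighbors; keep pairs with at least min_shared."""
    suggestions = []
    for u, v in combinations(sorted(graph), 2):
        if v in graph[u]:
            continue  # already directly connected, nothing to suggest
        shared = graph[u] & graph[v]
        if len(shared) >= min_shared:
            suggestions.append((u, v, sorted(shared)))
    return suggestions


if __name__ == "__main__":
    for suggestion in suggest_links(build_graph(EDGES)):
        print(suggestion)
```

Both suggestions this prints are artifacts of shared service providers, which is why a human still has to judge whether a surfaced link means anything.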

What's the future of AI-assisted investigations? Expect more automated case linking, predictive prosecution, and algorithmic prioritization. As data collection expands, so does investigative capability—but so does the risk of technological overreach.


Updated 0439 GMT | YEET MAGAZINE TECH DESK