How AI Performance Metrics Failed to Value a 27-Year Burger King Employee

Burger King's algorithmic approach to employee rewards stripped a 27-year veteran of meaningful recognition. This case exposes how companies automate away gratitude—and why it backfires when algorithms replace human judgment.

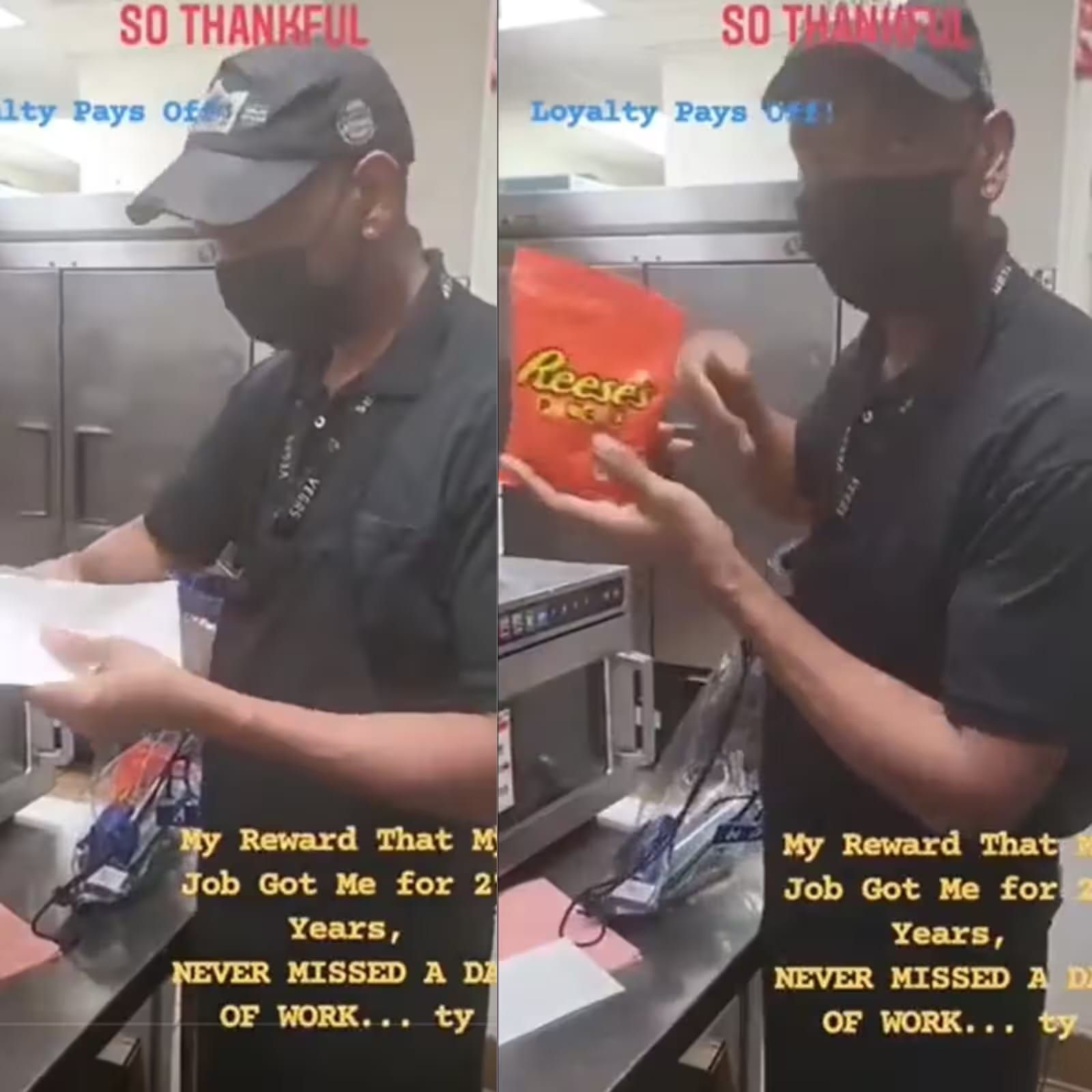

Burger King's employee recognition system failed a 54-year-old cook after 27 years of service. Instead of meaningful compensation, Kevin Ford received a generic goodie bag, likely pulled from an automated rewards algorithm that treats tenure like any other short-term performance metric. This isn't just bad management; it's a glimpse into how AI-driven HR systems can systematically devalue loyalty. When machines calculate employee worth, decades of dedication get the same algorithmic treatment as a quarterly sales spike.

By YEET Magazine Staff | Updated: May 13, 2026

Kevin Ford worked at the Las Vegas airport Burger King location for 27 years. He showed up. He did the job. He expected something that reflected that commitment. Instead, he got a bag of stuff that looked like it came from a corporate clearance bin.

Here's the kicker: before automation took over HR decisions, employees received actual money for anniversaries. Then algorithms arrived, optimized for cost reduction, and suddenly loyalty became just another data point. The system probably flagged Kevin as "low-impact" or "standard performer" and auto-assigned him a tier-2 reward from a pre-built matrix.

The viral video exposed something deeper than one company's tone-deafness. It revealed how automated recognition systems lack the basic human capacity to understand context. A 27-year employee isn't data. But to an algorithm, he's a line in a spreadsheet.

Burger King claimed the video showed a "peer-to-peer reward for short-term positive performance." Translation: they justified algorithmic mediocrity by pretending the goodie bag was never supposed to honor his actual tenure. The distinction matters. It shows a company hiding behind automation instead of owning a decision.

Kevin's daughter launched a GoFundMe that raised more than $250,000. Strangers saw what the algorithm missed: a person worth valuing. That's what happens when you outsource gratitude to machines.

The real story isn't about a bad goodie bag. It's about how HR automation bias systematically undervalues long-term employees. Companies implement AI-driven rewards to cut costs and improve "efficiency," but they end up communicating the opposite of what they intend: "You don't matter enough for human judgment."

Employee retention is already fragile. When automated systems handle recognition, companies signal that loyalty is worth less than the algorithm's optimization target. Kevin stayed 27 years. The algorithm gave him a bag. Now he's famous on the internet, and Burger King looks cheap.

This is the case for keeping humans in the loop on recognition decisions rather than fully automating them. Algorithms are great at scaling, awful at mattering.

What could Burger King have done? It could have built recognition systems with tenure-based thresholds that trigger human review. At 5 years, 10 years, and 25 years, an actual person reviews the file and signs off. That costs more upfront, but it prevents a viral disaster and actually helps retain people.
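A tenure threshold like this could be sketched in a few lines of Python. This is a minimal illustration, not a description of any system Burger King actually runs: the `Employee` class, the `route_recognition` function, and the `"human_review"` / `"automated"` labels are hypothetical names, and the milestone years are simply the ones mentioned above.

```python
from dataclasses import dataclass
from datetime import date

# Milestone years taken from the examples in the text (an assumption,
# not a known company policy).
REVIEW_MILESTONES = (5, 10, 25)


@dataclass
class Employee:
    name: str
    hire_date: date


def completed_years(hire_date: date, today: date) -> int:
    """Count full years of service as of `today`."""
    years = today.year - hire_date.year
    # Subtract one if this year's anniversary has not happened yet.
    if (today.month, today.day) < (hire_date.month, hire_date.day):
        years -= 1
    return years


def route_recognition(employee: Employee, today: date) -> str:
    """Send milestone anniversaries to a person; leave the rest automated."""
    years = completed_years(employee.hire_date, today)
    if years in REVIEW_MILESTONES:
        return "human_review"
    return "automated"
```

The point of the design is that the algorithm never decides what a milestone is worth. It only notices that one has arrived and hands the file to a person.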

The bigger picture: We're deploying AI across HR at scale without asking whether algorithms can make judgment calls that require empathy. Employee recognition is one of those decisions that shouldn't be fully automated. Neither should firing, promotions, or compensation reviews. Yet companies automate them every day because the automation layer is cheap.

What happens next? Other companies will either learn from this or ignore it. Those that ignore it will keep wondering why their retention numbers tank and why employees feel disposable. Those that learn will re-introduce human judgment at critical moments—and their employees will actually stick around.

Q: Do automated HR systems always devalue employees?

Not inherently. The problem is optimization targets. If an algorithm is optimized for cost-cutting, loyalty loses. If it's optimized for retention and satisfaction, it might actually work. Most companies choose the former.

Q: What's the solution—scrap AI in HR entirely?

No. Use AI for what it's good at: processing data, identifying patterns, flagging edge cases for human review. Don't use it for final decisions on recognition, firing, or compensation without human sign-off.

Q: Could this have been avoided with better data?

Yes, though the fix is a rule more than it is data. If the system had a simple rule such as "employees with 25+ years tenure = elevated recognition tier requiring manager approval," it would have caught the edge case. But that costs slightly more, so most companies skip it.
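For readers who want to see how small that rule is, here is a rough Python sketch. Everything in it is hypothetical: the `RecognitionDecision` type, the `assign_recognition` function, and the tier names are illustrative, and only the 25-year threshold comes from the rule quoted above.

```python
from dataclasses import dataclass

# Threshold from the rule quoted in the text; the tier names are made up.
ELEVATED_TENURE_YEARS = 25


@dataclass(frozen=True)
class RecognitionDecision:
    tier: str
    requires_manager_approval: bool


def assign_recognition(tenure_years: int, default_tier: str = "standard") -> RecognitionDecision:
    """Apply the tenure rule before any automated tiering is accepted."""
    if tenure_years < 0:
        raise ValueError("tenure_years must be non-negative")
    if tenure_years >= ELEVATED_TENURE_YEARS:
        # Long-tenure cases are escalated and cannot be closed automatically.
        return RecognitionDecision(tier="elevated", requires_manager_approval=True)
    return RecognitionDecision(tier=default_tier, requires_manager_approval=False)
```

Under these assumptions, a 27-year employee would land in the elevated tier with a manager sign-off required, whatever tier the automated scoring would otherwise have picked.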

Q: Why does this matter for the future of work?

Because we're building a workforce where machines make decisions that affect people's lives and morale. If those machines aren't designed with human values baked in, we get Kevin's goodie bag instead of Kevin's gratitude.
