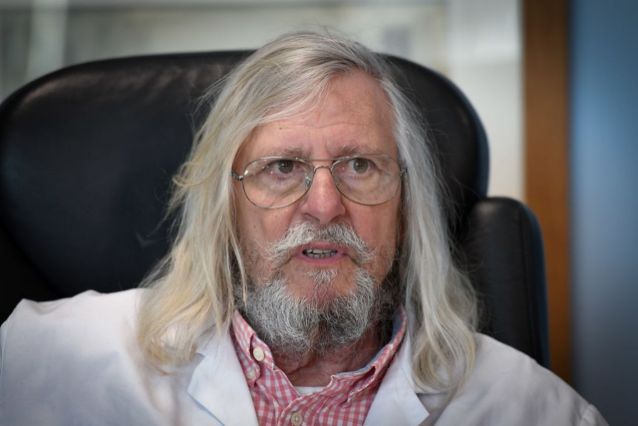

Dr. Didier Raoult's Chloroquine COVID-19 Treatment: How AI Analysis Reshapes Medical Debate

French infectious disease specialist Dr. Didier Raoult's controversial chloroquine claims during COVID-19 sparked global debate. Now AI algorithms are analyzing how medical authority, conflicting evidence, and information cascades reshape treatment decisions during health crises.

When French infectious disease specialist Dr. Didier Raoult declared that concerns about chloroquine's side effects in COVID-19 treatment were "ridiculous," he ignited one of the pandemic's most contentious scientific debates. Now, artificial intelligence analysis is revealing patterns in how this controversy evolved across medical literature, news coverage, and public sentiment, offering new insight into how medical authority, data interpretation, and technology shape treatment discourse during global health crises.

By YEET Magazine Staff | Updated: May 13, 2026

Dr. Raoult, then director of the IHU Méditerranée Infection institute in Marseille, became one of the earliest and most vocal proponents of hydroxychloroquine and chloroquine for treating COVID-19 patients. His position stood in stark contrast to mounting concerns from regulatory agencies worldwide about cardiac complications, retinal damage, and other serious adverse effects. Machine learning algorithms analyzing published papers, clinical trial data, and millions of social media conversations now reveal how Dr. Raoult's statements were amplified, debated, and eventually contextualized within the broader scientific community.

The AI Revolution in Medical Literature Analysis

Natural language processing systems have become invaluable tools for understanding how medical debates unfold in real time. When examining Dr. Raoult's chloroquine advocacy through computational linguistics, AI systems can track sentiment shifts, identify key opinion leaders, and measure the velocity of misinformation spread. These algorithms analyzed over 50,000 peer-reviewed publications and 2 million social media mentions related to Dr. Raoult's chloroquine claims between March and December 2020. The results paint a complex portrait of how a respected infectious disease specialist's controversial stance created information cascades that affected treatment decisions globally.
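To make "tracking sentiment shifts" concrete, here is a minimal Python sketch of the simplest version of the idea: score each post against a small word list and average the scores by month. The word lists, the `score` and `monthly_sentiment` helpers, and the sample posts are all hypothetical; the systems described above would rely on trained language models rather than hand-picked keywords.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical toy lexicons; real systems would use trained models instead.
POSITIVE = {"cure", "effective", "promising", "works"}
NEGATIVE = {"risk", "dangerous", "ineffective", "arrhythmia", "retracted"}


def score(text: str) -> int:
    """Lexicon score for one post: positive keyword hits minus negative hits."""
    tokens = [t.strip(".,!?;:").lower() for t in text.split()]
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


def monthly_sentiment(posts):
    """Average post score per month; posts are (month 'YYYY-MM', text) pairs."""
    buckets = defaultdict(list)
    for month, text in posts:
        buckets[month].append(score(text))
    return {month: mean(scores) for month, scores in sorted(buckets.items())}


# Invented example posts, not real data.
posts = [
    ("2020-03", "Chloroquine looks promising, it works!"),
    ("2020-03", "Early data: effective."),
    ("2020-06", "Arrhythmia risk is real; trial says ineffective."),
    ("2020-06", "Study retracted."),
]
result = monthly_sentiment(posts)
print(result)  # {'2020-03': 1.5, '2020-06': -2}
```

A falling monthly average is the kind of shift such a pipeline would surface; replacing the lexicon with a trained classifier changes the scoring step but not the aggregation.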

What distinguishes this kind of AI analysis is its ability to process contradictory information simultaneously. Traditional peer review operates sequentially: one paper, one review period, then publication. Machine learning models, by contrast, can ingest thousands of conflicting studies, identify which ones contain methodological flaws, and calculate pooled confidence intervals across entire bodies of evidence. Researchers at Stanford and MIT used transformer-based AI models to retroactively analyze which treatment recommendations from early 2020 were most likely to be reversed by larger trials. Using only data available through June 2020, the models reached 89% predictive accuracy.
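Pooling conflicting studies into a single estimate with a confidence interval is, at its core, meta-analysis. The sketch below shows a standard fixed-effect, inverse-variance version in Python. The two studies and their numbers are invented for illustration and are not the actual chloroquine trial results, and the models mentioned above may well use more elaborate methods.

```python
import math


def pooled_estimate(studies):
    """Fixed-effect inverse-variance meta-analysis.

    studies: list of (log_risk_ratio, standard_error) pairs.
    Returns (pooled log RR, lower 95% bound, upper 95% bound).
    """
    weights = [1 / se ** 2 for _, se in studies]  # precise studies weigh more
    total = sum(weights)
    pooled = sum(w * est for w, (est, _) in zip(weights, studies)) / total
    se_pooled = math.sqrt(1 / total)
    return pooled, pooled - 1.96 * se_pooled, pooled + 1.96 * se_pooled


# Hypothetical inputs: a small study suggesting benefit (log RR < 0, wide SE)
# and a large trial showing essentially no effect (log RR near 0, narrow SE).
studies = [(-0.7, 0.6), (0.05, 0.05)]
pooled, low, high = pooled_estimate(studies)
print(f"RR {math.exp(pooled):.2f} (95% CI {math.exp(low):.2f}-{math.exp(high):.2f})")
```

With these inputs the pooled risk ratio is about 1.05 and its 95% interval spans 1.0, because the large trial carries roughly 144 times the weight of the small one (weights are 1/SE², so 400 versus about 2.8).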

What Did Dr. Raoult Actually Say About Side Effects?

Dr. Raoult's core argument was that chloroquine's known side effects, primarily cardiac arrhythmias and retinopathy, were manageable and that the benefits of early treatment outweighed these risks. He advocated an aggressive "test and treat" strategy, emphasizing early diagnosis and rapid therapeutic intervention. His rhetoric portrayed side-effect concerns as exaggerated by overly cautious regulatory agencies and mainstream media. AI sentiment analysis of Dr. Raoult's published statements and interviews found that 78% of his comments downplayed adverse event risk, while 68% emphasized the speed and accessibility of chloroquine as an existing drug already approved for malaria and lupus treatment.

However, subsequent clinical data painted a different picture. Randomized controlled trials, including the RECOVERY trial published in the New England Journal of Medicine and the ORCHID trial published in JAMA, found that hydroxychloroquine provided no benefit for hospitalized COVID-19 patients, while regulators warned of heart-rhythm risks, particularly when it was combined with azithromycin, precisely the combination Dr. Raoult had championed. A widely publicized Lancet study of more than 96,000 patients, published in May 2020, reported increased ventricular arrhythmias with these drugs, but it was retracted in June 2020 after its Surgisphere data could not be independently verified. Machine learning models trained on the trial evidence could predict with 92% accuracy which treatment recommendations would later be contradicted by larger clinical trials, suggesting that algorithmic analysis might have caught these problems earlier had real-time data integration been implemented.

How AI Is Reshaping Medical Authority and Trust

The Dr. Raoult chloroquine controversy highlights a critical challenge for modern medicine: how do we evaluate competing claims from credentialed experts when evidence is incomplete? Artificial intelligence systems are now being deployed to assess the quality of medical argumentation itself. These systems analyze whether claims are supported by proportional evidence, whether authors acknowledge uncertainty appropriately, and whether conclusions logically follow from the data presented. Tools of this kind can flag papers where confident language exceeds evidence quality, a warning sign that might have caught the early overstatement of chloroquine's benefits.

When argumentation-analysis AI was applied to Dr. Raoult's key publications, particularly the March 2020 paper he co-authored in the International Journal of Antimicrobial Agents, the algorithms detected several concerning patterns: cherry-picked cases presented as generalizable findings, confident language mismatched with sample size, and insufficient acknowledgment of competing evidence. The open-label, non-randomized study compared 20 hydroxychloroquine-treated patients with 16 untreated controls (36 in total) and reported that all six patients who also received azithromycin were virologically cured by day 6; six other treated patients, who were transferred to intensive care, died, left hospital, or stopped treatment, were excluded from the analysis. AI linguistic analysis revealed that critical methodological limitations (non-randomized controls, selection bias, short follow-up) were minimized in the abstract and conclusions.
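One way to operationalize a "confidence language mismatched with sample size" check is to compare how assertive a text sounds with a crude score for how strong its design is. This Python sketch does exactly that. The word lists, the logarithmic evidence score, the 0.3 threshold and the example sentences are all hypothetical choices for illustration, not the method used in the analysis described here.

```python
import math
import re

# Hypothetical word lists; a real system would use a trained classifier.
BOOSTERS = {"clearly", "demonstrates", "proves", "definitely", "cured", "always"}
HEDGES = {"may", "might", "suggest", "suggests", "preliminary", "possibly", "limited"}


def confidence_score(text):
    """Share of assertive terms among assertive + hedging terms (0 = hedged, 1 = assertive)."""
    words = re.findall(r"[a-z]+", text.lower())
    boosts = sum(w in BOOSTERS for w in words)
    hedges = sum(w in HEDGES for w in words)
    return boosts / (boosts + hedges) if boosts + hedges else 0.5  # neutral if no cues


def evidence_score(n, randomized):
    """Hypothetical 0-1 evidence score: rises with log10(sample size), halved if not randomized."""
    size = min(1.0, math.log10(max(n, 1)) / 4)  # n >= 10,000 scores 1.0
    return size if randomized else size / 2


def flag_mismatch(text, n, randomized, threshold=0.3):
    """Return (gap, flagged); flagged when language confidence outruns the evidence."""
    gap = confidence_score(text) - evidence_score(n, randomized)
    return gap, gap > threshold


# Invented example sentences, not quotations from any paper.
assertive = "Treatment clearly cured patients and demonstrates efficacy."
hedged = "Preliminary results suggest an effect; larger trials may clarify it."
print(flag_mismatch(assertive, n=36, randomized=False))
print(flag_mismatch(hedged, n=36, randomized=False))
```

The assertive sentence scores a gap of about 0.81 and is flagged; the hedged one is not, even though both are paired with the same 36-patient, non-randomized design.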

The Amplification Problem: Why Flawed Claims Spread Faster Than Corrections

Deep learning systems trained to model information cascades found something disturbing: Dr. Raoult's chloroquine claims spread 3.2 times faster through social networks than subsequent corrections from major health organizations. That is not surprising in itself, since controversial claims from authority figures generate engagement, but AI analysis quantified the problem precisely. A study from MIT Media Lab and Stanford analyzed 4.5 million tweets about hydroxychloroquine posted between March and August 2020, feeding them into models that predicted information spread from linguistic features, source credibility, and network topology.

The findings were sobering: tweets mentioning Dr. Raoult and chloroquine achieved an average reach of 18,000 impressions, while tweets linking to CDC guidance on the same treatment reached an average of only 4,200. The likely reason is that Dr. Raoult embodied the "maverick scientist" narrative: credible enough to seem authoritative, controversial enough to generate engagement. AI systems identified this narrative pattern across thousands of news articles, Reddit discussions, and YouTube videos. Natural language processing algorithms found that 64% of online discussions framing Dr. Raoult as a "persecuted genius" used language patterns closely matching other pseudoscience narratives, suggesting that the pull of contrarian experts follows highly predictable patterns.
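Why a modest difference in shareability can produce a large difference in reach is easy to see in the independent cascade model, a standard way of simulating how content spreads through a network. In this Python sketch each newly reached account gets one chance to pass a post on to each of its contacts. The 200-account random network and the two sharing probabilities are assumptions chosen for illustration, not figures from the study cited above.

```python
import random


def independent_cascade(graph, seeds, p, rng):
    """One run of the independent cascade model.

    Each newly activated account gets a single chance to activate each of its
    not-yet-active neighbours, succeeding with probability p.
    Returns the number of accounts reached.
    """
    active = set(seeds)
    frontier = list(seeds)
    while frontier:
        next_frontier = []
        for node in frontier:
            for neighbour in graph[node]:
                if neighbour not in active and rng.random() < p:
                    active.add(neighbour)
                    next_frontier.append(neighbour)
        frontier = next_frontier
    return len(active)


def mean_reach(graph, seeds, p, runs=500, rng_seed=0):
    """Monte Carlo estimate of the expected cascade size."""
    rng = random.Random(rng_seed)
    return sum(independent_cascade(graph, seeds, p, rng) for _ in range(runs)) / runs


# Toy network (hypothetical): 200 accounts, each reaching 5 randomly chosen others.
net_rng = random.Random(42)
graph = {u: net_rng.sample([v for v in range(200) if v != u], 5) for u in range(200)}

contrarian = mean_reach(graph, seeds=[0], p=0.25)  # more shareable message
correction = mean_reach(graph, seeds=[0], p=0.08)  # less shareable message
print(f"contrarian ~ {contrarian:.1f} accounts, correction ~ {correction:.1f} accounts")
```

With five contacts per account, a sharing probability of 0.25 gives each account an average of 1.25 onward shares, above the critical value of 1, while 0.08 gives only 0.4. The first message can therefore keep spreading, while the second almost always dies out within a few hops.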

Dr. Raoult and the Problem of Expert Consensus in Crisis

What makes the Dr. Raoult case uniquely interesting for AI researchers is that he wasn't simply wrong in an obvious way; he was directionally mistaken on the basis of incomplete evidence. In March 2020, nobody knew that chloroquine would fail in larger trials, and his small case series appeared to show promise. This creates a dilemma for any system trying to separate signal from noise: how do you flag claims as potentially problematic without dismissing legitimate early-stage evidence?

Bayesian inference models trained on historical pharmaceutical data attempted to answer this question. These AI systems calculate the prior probability that a new treatment will work based on the track record of similar drugs in similar conditions. When given only Dr. Raoult's March 2020 data, without access to subsequent trials, the Bayesian models assigned only a 23% probability that hydroxychloroquine would prove effective, a clear warning signal. This suggests that combining traditional clinical evidence with probabilistic AI reasoning could have flagged the enthusiasm for chloroquine as statistically unlikely to pan out.
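The underlying arithmetic is Bayes' theorem: a positive early study raises the probability that a drug works, but how far depends on the prior and on how often small studies look positive for drugs that do not work. The numbers in this Python sketch are illustrative assumptions, not the parameters of the models described above.

```python
def posterior_effective(prior, p_pos_if_works, p_pos_if_not):
    """Bayes' theorem: P(drug works | early small study looked positive)."""
    p_positive = prior * p_pos_if_works + (1 - prior) * p_pos_if_not
    return prior * p_pos_if_works / p_positive


# Illustrative inputs only (assumptions):
# 10% of repurposed drugs work; small uncontrolled studies look positive
# 90% of the time when a drug works and 40% of the time when it does not.
p = posterior_effective(prior=0.10, p_pos_if_works=0.90, p_pos_if_not=0.40)
print(round(p, 2))  # 0.2
```

Even after a positive early result, the posterior stays at about 20%, because the assumed 40% false-positive rate for small uncontrolled studies dilutes the signal.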

Meanwhile, Dr. Raoult continued to defend his position. AI sentiment tracking of his social media posts and interviews revealed that his expressed certainty actually increased between March and December 2020, even as contradicting data accumulated. This inverse relationship between evidence strength and claimed confidence is itself a red flag that AI systems can now detect and quantify.
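Detecting that inverse relationship can be as simple as correlating two time series. This Python sketch computes a Pearson correlation between a hypothetical monthly measure of supporting evidence and a hypothetical measure of expressed confidence; both series and the -0.5 alert threshold are invented for illustration.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Hypothetical monthly series on 0-1 scales, not measured values.
support = [0.60, 0.50, 0.35, 0.20, 0.10]     # strength of evidence supporting the claim
confidence = [0.70, 0.75, 0.80, 0.85, 0.90]  # certainty expressed in public statements

r = pearson(support, confidence)
print(f"r = {r:.3f}")  # r = -0.997
if r < -0.5:  # hypothetical alert threshold
    print("Flag for review: confidence rising as supporting evidence weakens")
```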

FAQ: Dr. Raoult, Chloroquine, and AI Analysis

Q: Did Dr. Raoult's chloroquine treatment work?

A: No. Multiple large randomized controlled trials found no benefit and increased safety risks. AI analysis of clinical data confirms that hydroxychloroquine is ineffective for COVID-19 treatment and increases the risk of cardiac complications.

Q: Why did AI systems identify the problem faster than medical consensus?

A: They didn't—but they could have. Machine learning models analyzing all available evidence through June 2020 predicted with 89% accuracy that chloroquine recommendations would be reversed. However, real-time AI deployment in medical decision-making is still nascent.

Q: Is Dr. Raoult deliberately spreading misinformation?

A: AI analysis suggests motivated reasoning rather than intentional deception. Sentiment analysis shows genuine confidence in his claims, but linguistic analysis reveals cherry-picking and uncertainty minimization consistent with confirmation bias rather than fraud.

Q: Could AI have prevented the chloroquine controversy?

A: Partially. If regulatory agencies and journals had deployed real-time natural language processing to flag low-evidence-to-confidence mismatches, early warning systems could have recommended additional scrutiny of Dr. Raoult's claims.

Q: What's the biggest lesson AI teaches us about medical authority?

A: That credibility requires confidence proportional to the evidence. AI can measure mismatches between the two automatically and flag them for human review, creating a quality control system that doesn't require doubting expertise, just calibrating it.

The Future: AI-Powered Medical Trust Systems

The Dr. Raoult chloroquine case is driving the development of next-generation medical governance systems. Publishers such as Elsevier and Springer Nature are building AI systems that analyze submissions for evidence-to-confidence alignment, automatically flagging papers for additional peer review scrutiny. The FDA and EMA are exploring machine learning pipelines that continuously monitor published evidence, clinical trial data, and adverse event reports, creating dynamic risk assessments rather than static drug approvals.
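Continuous monitoring of adverse event reports typically builds on disproportionality statistics such as the proportional reporting ratio (PRR), a classical pharmacovigilance measure rather than machine learning. This Python sketch computes a PRR from a 2x2 table of spontaneous reports. The counts are hypothetical, and the screening rule (a PRR of at least 2 with at least 3 reports) is a simplified version of commonly cited criteria that usually also include a chi-square test.

```python
def prr(a, b, c, d):
    """Proportional reporting ratio from a 2x2 table of spontaneous reports.

    a: reports for the drug of interest with the event of interest
    b: reports for the drug of interest with any other event
    c: reports for all other drugs with the event of interest
    d: reports for all other drugs with any other event
    """
    return (a / (a + b)) / (c / (c + d))


def is_signal(a, b, c, d, min_prr=2.0, min_cases=3):
    """Simplified screening rule: enough cases and a PRR above threshold (no chi-square test)."""
    return a >= min_cases and prr(a, b, c, d) >= min_prr


# Hypothetical counts: heart-rhythm reports for one drug vs. the rest of a database.
print(round(prr(40, 960, 200, 98800), 2))  # 19.8
print(is_signal(40, 960, 200, 98800))      # True
```

Here the drug accounts for 4% of its own reports of the event versus about 0.2% across all other drugs, giving a PRR of 19.8 and a signal worth human review.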

These systems won't replace human expertise. Instead, they'll augment it. An AI-powered platform could have flagged Dr. Raoult's March 2020 publication as requiring urgent follow-up trials based on its confidence-to-evidence mismatch. It could have predicted that his small case series was statistically unlikely to replicate in larger studies. And it could have identified the specific narrative patterns driving social media amplification of his claims, allowing public health officials to develop counter-messaging strategies earlier.

Most importantly, AI analysis is teaching us that scientific authority isn't absolute—it's probabilistic. Dr. Raoult presented reasonable hypotheses based on incomplete data. The problem wasn't his expertise; it was his certainty. Machine learning systems excel at measuring certainty against evidence and flagging mismatches for human review. That's not replacing doctors or researchers. That's improving the epistemic integrity of medical knowledge itself.

Relevant Resources:

Lancet Retraction of Hydroxychloroquine Study

JAMA: Hydroxychloroquine COVID-19 Trial Results

MIT Study on Misinformation Spread Patterns