How Algorithm-Driven Social Media Killed an Influencer: The Sophia Cheung Case & AI's Role in Algorithmic Risk

Sophia Cheung's fatal waterfall selfie wasn't just bad luck—it was the inevitable outcome of algorithms designed to reward extreme content. We examine how AI-driven engagement metrics and recommendation systems push creators toward increasingly dangerous behavior.

On July 13, 2021, 32-year-old Hong Kong influencer Sophia Cheung fell 16 feet (about 5 meters) from a waterfall while chasing the perfect selfie. She died at the scene. But here's the uncomfortable truth: her death wasn't just a tragic accident. It was the predictable outcome of algorithms engineered to reward extreme, dangerous content. Social media platforms use AI systems that measure engagement through likes, shares, and time spent watching. The more dangerous the content, the more the algorithm amplifies it. Cheung wasn't reckless; she was trapped in a system designed to incentivize exactly this behavior.

By YEET Magazine Staff | Updated: May 13, 2026

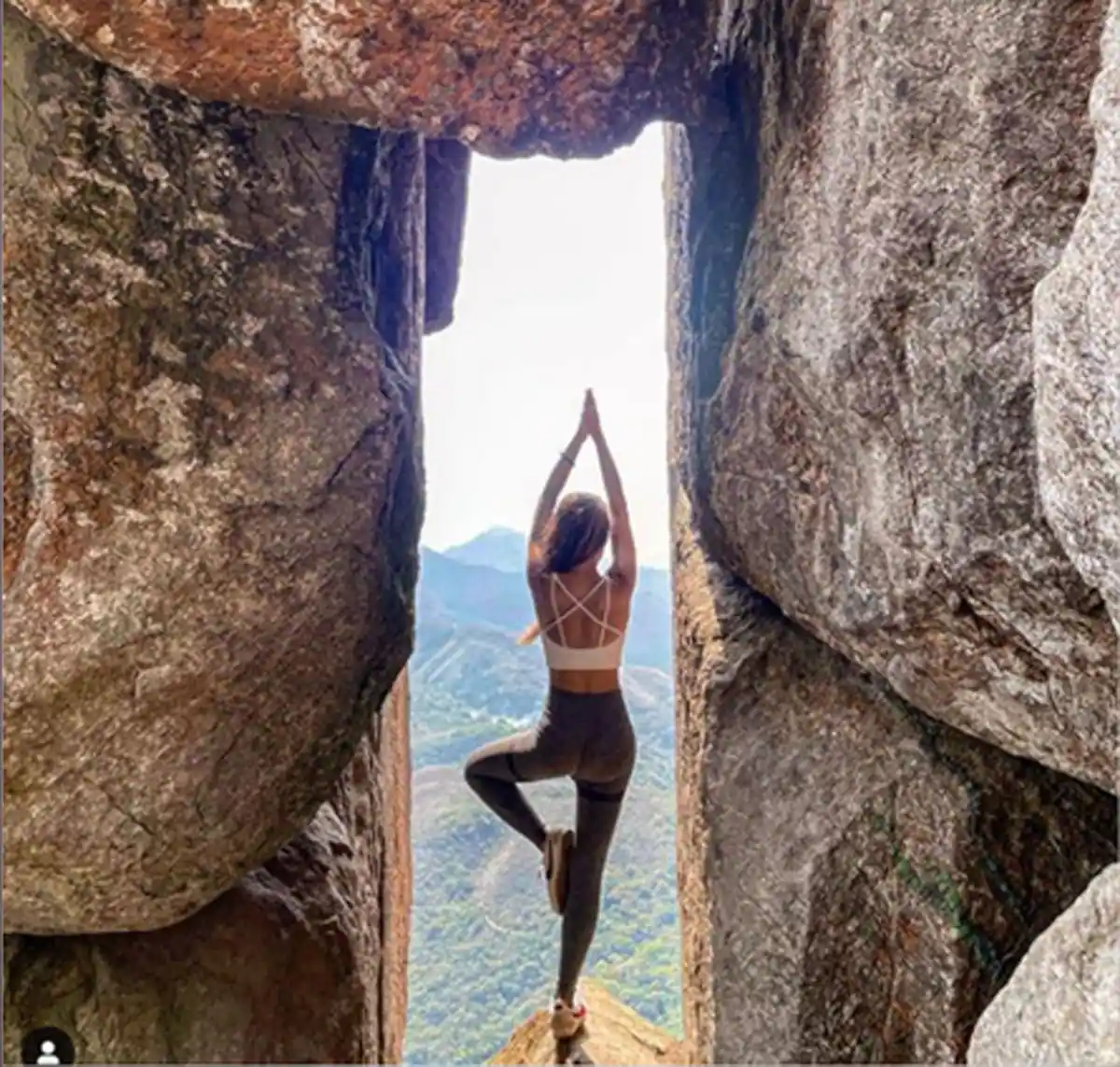

Sophia Cheung was a hiking influencer with thousands of followers on Instagram. Her brand was "Hike.Sofi"—she posted stunning landscape photography from dangerous locations across Hong Kong. Mountains. Cliffs. Waterfalls. The more perilous the location, the better the engagement.

In March 2021, she posted a photo of herself hanging from a wall on Sunset Peak, Hong Kong's third-highest mountain. In May, she posed serenely at a cliff edge on Nei Lak Shan, a 751-meter peak on Lantau Island. Each post performed better than the last. The algorithm learned what worked: extreme locations + physical danger + aesthetic photography = viral engagement.

How Algorithms Weaponize Engagement Metrics

Instagram's recommendation algorithm doesn't understand mortality. It doesn't care about safety. It is a set of machine learning models that predict how likely each viewer is to like, comment on, share, or linger over a post, and it ranks content by those predictions. In effect, it optimizes for one thing: engagement. Videos and photos that trigger emotional responses (awe, fear, jealousy, adrenaline) rank higher in feeds. They get reshared. They trend.

Content from dangerous locations naturally triggers these responses. A photo of someone hanging off a cliff generates more engagement than a photo of someone on a safe hiking trail. The algorithm picks up on this pattern and recommends similar content to more users. More views. More engagement. More revenue for the platform.
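The ranking logic described above can be sketched as a weighted sum of predicted engagement. This is a minimal illustration, not Instagram's actual system: the action names, the weights in `WEIGHTS`, and every probability below are invented, since platforms do not publish theirs.

```python
from dataclasses import dataclass

# Hypothetical per-action weights: real platforms do not publish these values.
WEIGHTS: dict[str, float] = {"like": 1.0, "comment": 4.0, "share": 6.0, "dwell": 2.0}


@dataclass
class Post:
    post_id: str
    # Model-predicted probability that a given viewer takes each action
    # ("dwell" = lingers on the post). All values below are invented.
    predicted: dict[str, float]


def engagement_score(post: Post) -> float:
    """Weighted sum of predicted engagement; note that no term measures risk."""
    return sum(WEIGHTS[action] * p for action, p in post.predicted.items())


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order candidate posts by expected engagement, highest first."""
    return sorted(posts, key=engagement_score, reverse=True)


safe_trail = Post("safe_trail", {"like": 0.05, "comment": 0.005, "share": 0.002, "dwell": 0.10})
cliff_edge = Post("cliff_edge", {"like": 0.09, "comment": 0.015, "share": 0.010, "dwell": 0.25})

for post in rank_feed([safe_trail, cliff_edge]):
    print(post.post_id, round(engagement_score(post), 3))
```

With these made-up numbers the cliff post scores about 0.71 against about 0.28 for the trail, so it takes the top slot. The point is structural: because the objective has no safety term, whatever reliably draws more comments, shares, and dwell time wins.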

For creators, the math is simple: follow the algorithm or watch your reach tank. Sophia Cheung wasn't choosing death—she was choosing survival in a creator economy where algorithms decide who eats and who starves.

The Data Behind Dangerous Content Creation

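Research on how content spreads online shows that extreme material receives disproportionate distribution. A 2018 MIT study of Twitter, published in Science, found that false news reached 1,500 people about six times faster than true news. The researchers linked that speed to novelty and to strong emotional reactions such as fear and surprise. The study did not look at risky stunts, but the same dynamic plausibly applies to high-risk content: it travels farther, faster.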

Creators competing in this space face a ruthless optimization problem. Stay safe and fade into obscurity. Or escalate content and survive algorithmically. The system offers no middle ground.
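The creator's side of this can be written as a toy optimization problem. The sketch below assumes a made-up reach curve in which expected impressions rise with how dangerous a shot looks, and assumes the reach a creator needs to stay visible is set by what competitors are getting. The curve, the risk scale, and the thresholds are all hypothetical.

```python
from typing import Optional

# Hypothetical reach curve: expected impressions for a post at each risk level,
# from 0 (a paved trail) to 5 (hanging off a cliff edge). Numbers are invented.
REACH_BY_RISK: dict[int, int] = {0: 800, 1: 1_100, 2: 1_500, 3: 2_200, 4: 3_300, 5: 5_000}


def reach_maximizing_risk() -> int:
    """The risk level a pure reach-maximizer would pick."""
    return max(REACH_BY_RISK, key=REACH_BY_RISK.__getitem__)


def min_risk_to_stay_visible(required_reach: int) -> Optional[int]:
    """Smallest risk level whose expected reach meets the target, or None if none does."""
    for risk in sorted(REACH_BY_RISK):
        if REACH_BY_RISK[risk] >= required_reach:
            return risk
    return None


print("reach-maximizing risk:", reach_maximizing_risk())
# The visibility bar is set by peers, so it rises as they escalate.
for bar in (1_000, 2_000, 4_000, 6_000):
    print(bar, min_risk_to_stay_visible(bar))
```

Under these assumptions a pure reach-maximizer always picks the riskiest option, and as the bar rises from 1,000 to 4,000 impressions, the safest viable choice climbs from level 1 to level 5. Past 5,000, no option is enough: that is the "fade into obscurity" branch.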

Platform designers know this. Internal Facebook (now Meta) documents leaked in 2021 showed that the company's own researchers had found its ranking algorithm amplified divisive, emotional content. The company largely kept that system in place because engagement drove revenue. Same incentive structure. Different outcome.

The Automation of Risk

Platforms employ automated content moderation systems that flag violence, hate speech, and explicit material. But they don't flag high-risk activities that read as "inspiring" and perform well. A person hanging from a cliff isn't explicitly breaking community guidelines. The moderation AI is simply looking the wrong way.

These systems are trained to recognize patterns, but not to tell aspirational content from life-threatening behavior. An algorithm sees engagement. It doesn't see death waiting at the bottom.
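One way to picture this gap is a policy layer sitting on top of an image classifier. The sketch below assumes a simple label-plus-confidence classifier output and an invented policy list. The model detects the cliff edge with high confidence, yet nothing in the policy tells the pipeline to act on it.

```python
# Hypothetical moderation policy: the only categories this pipeline acts on.
POLICY_CATEGORIES = frozenset({"violence", "hate_speech", "explicit"})
FLAG_THRESHOLD = 0.8  # hypothetical confidence cutoff


def moderate(labels: dict[str, float]) -> list[str]:
    """Return the policy categories whose classifier confidence meets the cutoff.

    `labels` maps a detected concept to the image classifier's confidence.
    Concepts outside POLICY_CATEGORIES are ignored, however confident.
    """
    return sorted(
        category
        for category, confidence in labels.items()
        if category in POLICY_CATEGORIES and confidence >= FLAG_THRESHOLD
    )


# Invented classifier output for a waterfall-edge selfie.
waterfall_selfie = {"person": 0.99, "waterfall": 0.97, "cliff_edge": 0.93, "violence": 0.02}
flags = moderate(waterfall_selfie)
print(flags or "no policy violation: eligible for recommendation")
```

In this toy setup the blind spot lives in the policy list rather than in the classifier: the post is "seen" accurately and still waved through to ranking.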

What Should Happen Now

Real change would require platforms to optimize for something other than engagement. Mental health. Safety. Dignity. But that cuts into revenue, and revenue is what matters to shareholders.

Some platforms have started adding friction, such as warning labels on dangerous content and slower distribution for high-risk activities. But these measures are largely theater. The incentive structure remains unchanged. The algorithm still rewards extreme content.

Regulators in the EU and UK are starting to mandate algorithmic transparency. Creators should know how their content is being recommended. They should understand the optimization objectives they're competing against. That's a start.

But until platforms change their core metrics—until they stop measuring success by engagement and start measuring it by wellbeing—more creators will chase cliffs for likes. Some will fall.

The Broader Implications for the Creator Economy

Sophia Cheung's death isn't unique. It's symptomatic of an economy structured around algorithmic amplification of extreme behavior. As AI gets better at predicting what drives engagement, the pressure on creators intensifies. The algorithm becomes a relentless optimizer of human risk-taking.

This affects all creators—not just hikers. Performers, athletes, musicians, journalists. Anyone competing for algorithmic distribution operates in a system that rewards extremity. The incentives are baked into the infrastructure.

Future-of-work discussions need to include this. As more jobs depend on algorithmic approval and recommendation, we're automating not just labor but also human behavior. We're using AI to optimize people toward outcomes that might kill them.

Questions About Algorithmic Accountability

Could an AI system have prevented this? Theoretically, yes. A safety-optimized algorithm could limit distribution of high-risk content, flag dangerous locations, or require additional verification before promoting extreme activity content. But platforms would need to prioritize safety over engagement—which costs money.
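A minimal sketch of that safety-optimized alternative, assuming the platform has a separate model that scores how dangerous a post looks. The `risk` field, the thresholds, and the demotion factor are all hypothetical. Posts above one threshold get reduced distribution; posts above a higher threshold are held for human review instead of being promoted.

```python
from dataclasses import dataclass

# Hypothetical thresholds; no platform has published values like these.
RISK_DEMOTE = 0.5   # at or above: distribution is cut back
RISK_REVIEW = 0.8   # at or above: held for human review, never auto-promoted
DEMOTION = 0.2      # multiplier applied to demoted posts


@dataclass
class Candidate:
    post_id: str
    engagement: float  # predicted engagement score from the ranking model
    risk: float        # hypothetical predicted probability the post shows a dangerous activity


def adjusted_score(c: Candidate) -> float:
    """Engagement score, scaled down when predicted risk is high."""
    return c.engagement * (DEMOTION if c.risk >= RISK_DEMOTE else 1.0)


def rank_with_safety(cands: list[Candidate]) -> tuple[list[str], list[str]]:
    """Return (ranked post IDs, post IDs held for review)."""
    held = [c.post_id for c in cands if c.risk >= RISK_REVIEW]
    eligible = [c for c in cands if c.risk < RISK_REVIEW]
    ranked = sorted(eligible, key=adjusted_score, reverse=True)
    return [c.post_id for c in ranked], held


feed, review_queue = rank_with_safety([
    Candidate("safe_trail", engagement=0.28, risk=0.05),
    Candidate("cliff_edge", engagement=0.71, risk=0.60),
    Candidate("waterfall_edge", engagement=0.90, risk=0.90),
])
print(feed, review_queue)
```

With these invented numbers the trail post now outranks the cliff post, and the waterfall shot never reaches a feed without a reviewer's sign-off. The cost the article describes is visible in the code: every demotion throws away predicted engagement.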

Do platforms bear responsibility for algorithmic amplification? This is the legal and ethical frontier. If an algorithm actively recommends dangerous content to maximize engagement, and that content kills someone, is the platform liable? Courts are still figuring this out. The EU's Digital Services Act attempts to hold platforms accountable for algorithmic harms.

What alternatives exist? Decentralized social platforms without engagement-maximizing algorithms. Creator-owned networks. Systems optimized for different metrics: time offline, user wellbeing, informed decision-making. None of these exist at scale yet, partly because they don't generate the ad revenue that engagement-based models do.

Can creators opt out of algorithmic optimization? Not really. You can disable recommendations on some platforms, but you sacrifice reach. The system doesn't give creators a real choice. It's optimize or disappear.

How do we change incentive structures? Regulation. Transparency requirements