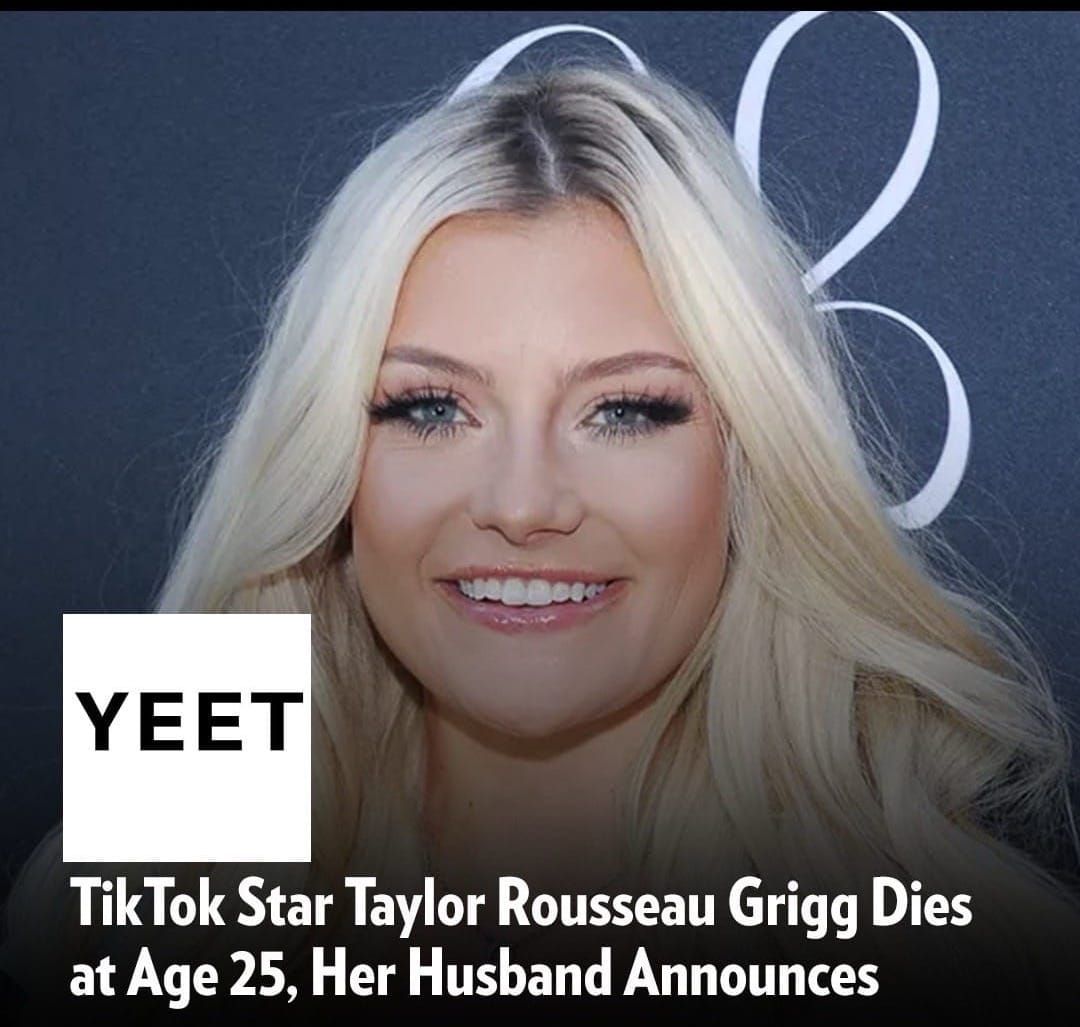

Taylor Rousseau Grigg + AI: How Algorithm-Driven Fame May Have Hidden a Health Crisis

Taylor Rousseau Grigg's tragic death at 25 exposed a darker side of algorithmic fame. We explore how AI-driven content curation may have masked her health struggles and what her story reveals about influencer culture in 2024.


When TikTok sensation Taylor Rousseau Grigg passed away in October 2024 at just 25 years old, the social media world reeled. But beneath the shock lay an uncomfortable possibility: recommendation algorithms may have turned her raw vulnerability into viral content while masking the severity of her private health battles.

The Algorithm's Perfect Storm

Taylor's 1.4 million followers loved her candid approach. She shared personal moments, health struggles, and unfiltered thoughts: exactly the type of content that TikTok's AI recommendation engine rewards. The platform's algorithm prioritizes engagement, and little drives engagement like emotional vulnerability. That is where AI may have created a dangerous feedback loop: the more Taylor shared about her pain, the more the algorithm amplified it, until her suffering risked becoming her brand.

AI systems like TikTok's don't understand nuance. They treat "health struggle content" as high-engagement material and push it relentlessly. As a result, creators can feel constant pressure to escalate their personal crises for visibility. For someone like Taylor, battling health issues she had not fully disclosed, this algorithmic incentive structure may have deepened a crisis that should have remained private.

What Did the Algorithm Miss?

Machine learning models learn from patterns, but they are notoriously bad at detecting human suffering. TikTok's content moderation AI can flag explicit content, but it is not built to reliably identify when a creator is genuinely in danger. Any signs of distress in Taylor's final videos are the kind of signal a human moderator might have caught, but AI alone cannot reliably interpret context or desperation.

The platform's safety systems are largely reactive, not predictive. They respond to reported content, not to creators who need help. In Taylor's case, there is no public indication that any algorithm triggered a wellness check or flagged her account for creator support resources. The same technology that made her famous proved powerless to protect her.

FAQ: AI, Algorithms, and Creator Safety

Q: Did TikTok's AI system contribute to Taylor Rousseau Grigg's death?

A: While no direct causation can be proven, the algorithmic incentive to share increasingly personal content likely intensified pressure on creators with health struggles. TikTok's recommendation system rewards vulnerability, creating unsustainable expectations for authenticity.

Q: How are social platforms using AI to protect creators?

A: Platforms are developing predictive models to identify at-risk creators, but these systems remain experimental and face accuracy challenges. Most AI safety measures focus on content moderation rather than creator welfare.

Q: Could AI have detected warning signs in Taylor's content?

A: Advanced sentiment analysis and behavioral AI could potentially flag concerning patterns, but most platforms don't prioritize this. Privacy concerns and liability issues keep platforms from intervening in creators' personal lives.

Q: What responsibility do platforms have for algorithmic harm?

A: This remains legally and ethically contested. In the United States, Section 230 of the Communications Decency Act generally shields platforms from liability for content their users post, but critics argue that algorithms designed to maximize engagement without safety guardrails constitute a form of negligence.

Q: Are there AI tools that could help creators like Taylor?

A: Yes. Sentiment analysis, mood detection, and wellness-check AI could be integrated into platforms. However, implementation requires significant investment and regulatory clarity that doesn't currently exist.

The Broader Impact: AI and Creator Culture

Taylor's death isn't an isolated tragedy. It's a symptom of a larger systemic problem in which AI prioritizes engagement over wellbeing. The algorithms governing TikTok, Instagram, YouTube, and other platforms are optimized for engagement metrics such as user retention and watch time. Creator health isn't a variable in that equation.
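To make that point concrete, here is a toy sketch of what an engagement-only ranking objective might look like. It is not any platform's real code: the `Post` fields, the scoring formula, and every number are invented for illustration. The only thing it is meant to show is that a wellbeing signal can sit in the data and still have no effect on what gets promoted.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    watch_seconds: float    # hypothetical: average watch time per view
    return_rate: float      # hypothetical: share of viewers who come back
    distress_signal: float  # hypothetical: 0..1 estimate of creator distress


def engagement_score(post: Post) -> float:
    """Score a post from engagement signals only.

    distress_signal is deliberately never read here, which is the
    whole point of the sketch.
    """
    return post.watch_seconds * (1.0 + post.return_rate)


def rank(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


# Invented sample data, not real platform figures.
posts = [
    Post("dance trend", 12.0, 0.20, 0.0),
    Post("cooking tip", 15.0, 0.10, 0.0),
    Post("health update", 18.0, 0.45, 0.9),
]

for post in rank(posts):
    print(f"{post.title}: {engagement_score(post):.1f}")
```

In this made-up feed the "health update" post ranks first, and raising or lowering its `distress_signal` would not move it, because the scoring function never looks at that field.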

Machine learning models are morally neutral. They don't intend harm. But when profit incentives align with algorithmic optimization, the human cost becomes invisible. Taylor posted content because the algorithm rewarded it. The algorithm rewarded it because engagement drives ad revenue. Somewhere in that loop, a person's suffering became a product.

What Comes Next?

Taylor's death should force platforms to reconsider their approach to creator safety. This means:

- Implementing AI wellness monitoring: flagging creators who show patterns of distress

- Removing algorithmic incentives for vulnerability content: deprioritizing mental health disclosures to prevent performative suffering

- Mandating creator support resources: AI-powered mental health chatbots and crisis intervention services

- Providing transparency in algorithmic amplification: letting creators know when content is being algorithmically promoted

- Establishing regulatory oversight: holding platforms accountable for algorithmic harms to creators

The Uncomfortable Truth

Taylor Rousseau Grigg may have been a victim of her own authenticity and, more fundamentally, of AI systems designed without safety guardrails. Her death exposes the dark side of algorithmic capitalism: when machines decide what deserves visibility, they often amplify exactly what should be hidden.

The influencer economy thrives on the promise that authenticity equals success. AI algorithms ensure that promise is kept—right up until the moment it kills you. Until platforms fundamentally redesign their systems to prioritize creator safety over engagement metrics, more creators will face the same invisible pressure that may have contributed to Taylor's death.

Her legacy shouldn't just be remembered. It should be a wake-up call that without ethical AI governance, the most vulnerable creators will always be the most exploited.

Published October 2024 | Yeet Magazine