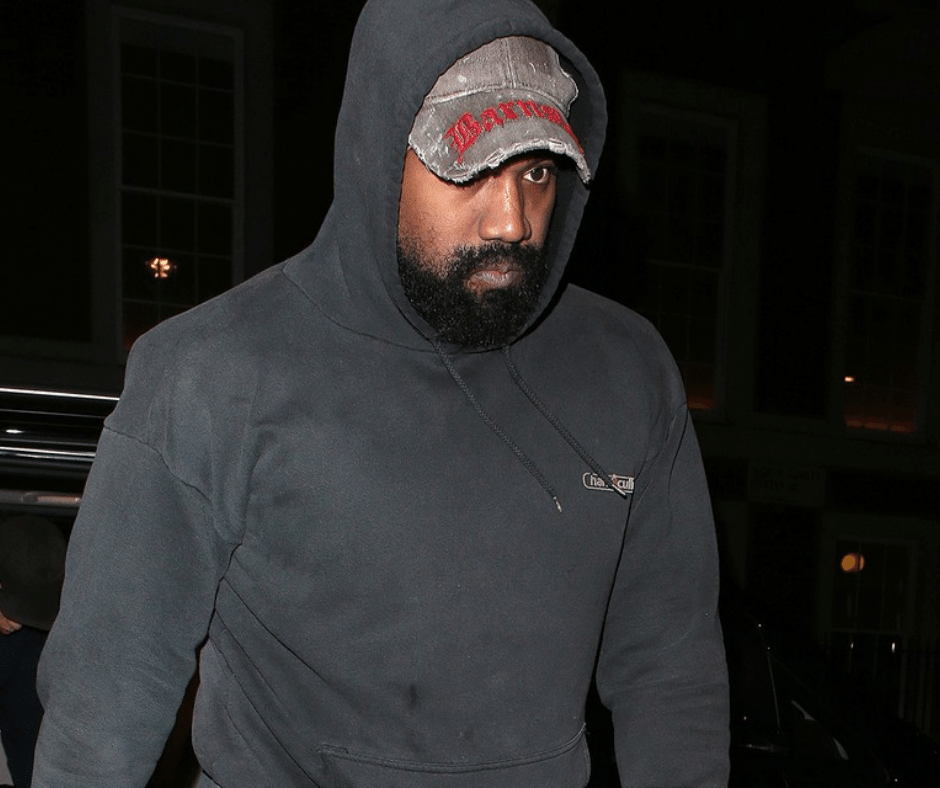

How AI Content Moderation Failed to Stop Kanye West's $2B Brand Collapse

Kanye West's $2 billion loss in a single day exposed major gaps in AI-powered content moderation systems. While platforms like Twitter and Instagram eventually suspended him, the delays revealed how algorithms struggle with real-time reputation threats and brand protection.

By YEET MAGAZINE | Updated 0339 GMT (1239 HKT) October 3, 2022

By YEET Magazine Staff | Updated: May 13, 2026

Kanye West's $2 billion evaporation in one day should terrify corporate risk teams everywhere. When Adidas and Gap severed ties following his antisemitic remarks, the break was not instant. AI content moderation systems at Twitter and Instagram caught up only after the damage had cascaded. This reveals a critical failure: both automated systems and human oversight lag behind real-time reputational crises, especially for high-profile figures whose posts reach millions before algorithmic flags kick in.

Here's what actually happened. West posted inflammatory content that violated platform policies, but the lag between posting, virality, and enforcement left brands watching their partnerships burn in public. Adidas, which generated roughly $2 billion in annual revenue from Yeezy products, announced an "immediate end" to the partnership. Gap followed hours later. Balenciaga had already exited days earlier.

West responded on Instagram: "I lost two billion dollars in one day, and I'm still alive." The post itself became a case study in how influencers can weaponize platforms faster than moderation algorithms can respond. Twitter suspended him after he tweeted threats. Instagram temporarily blocked him. But by then the damage was done: millions had seen the posts before enforcement kicked in.

This is the real problem with AI content moderation systems today. They're reactive, not predictive. Twitter's algorithm didn't flag West's content as high-risk before it went viral. Instagram didn't escalate it for human review when it first detected violations. The systems waited for user reports, then automated the enforcement—too late for brands betting billions on partnerships.

Corporate legal teams are now grappling with a new data nightmare: predictive brand risk algorithms. If AI can't reliably catch policy violations in real time for a creator with West's profile, how do companies assess partnership risk? Some firms are now building proprietary AI systems that track creator behavior across platforms, analyzing sentiment, language patterns, and shifts in tone over time before public crises hit.
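To make the idea of tracking a creator's tone over time concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a description of any firm's actual system: the per-post sentiment scores are assumed to come from some upstream model that is not shown, and the function names, window sizes, and threshold are all hypothetical.

```python
from statistics import mean


def sentiment_drift(scores, baseline_size=5, recent_size=3):
    """Return the recent-window mean minus the baseline-window mean.

    `scores` is a chronological list of per-post sentiment values in
    [-1, 1], assumed to be produced by an upstream model (hypothetical).
    The baseline is simply the earliest `baseline_size` posts.
    """
    if len(scores) < baseline_size + recent_size:
        raise ValueError("not enough posts to compare windows")
    baseline = mean(scores[:baseline_size])
    recent = mean(scores[-recent_size:])
    return recent - baseline


def is_drifting(scores, threshold=-0.5, **window_sizes):
    """Flag a creator whose recent tone fell sharply below the baseline.

    The threshold of -0.5 is an arbitrary illustrative value.
    """
    return sentiment_drift(scores, **window_sizes) <= threshold


# Made-up scores: a stable positive baseline followed by a sharp decline.
history = [0.4, 0.5, 0.3, 0.4, 0.4, 0.1, -0.6, -0.8, -0.9]
print(is_drifting(history))  # True
```

A flag like this would only tell a risk team that tone has shifted, not why. Deciding what to do with it remains a human call.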

What the West situation proves: algorithms are tools for damage control, not prevention. Ari Emanuel's public call for brands to dump West didn't trigger any AI alert. It was human judgment. Twitter and Instagram acted only after reports from users and media. By the time automation caught up, the market had already priced in the collapse.

West claimed he suffers from bipolar disorder, which raises another automation issue: should AI systems account for mental health context when moderating? Current algorithms don't. They flag violations equally regardless of user history, medical disclosures, or extenuating circumstances. That's a feature to some (consistency), a bug to others (lack of nuance).

The broader automation angle here matters for the future of work. If AI can't protect billion-dollar partnerships in real time, what does that mean for automated contract enforcement, algorithmic risk assessment, and data-driven HR decisions? The answer: we're still in the early phase, where automation handles the obvious stuff (content removal) but misses the strategic game (predicting crises before they happen).

What this means for brands and creators:

Expect more companies to invest in proprietary AI systems that monitor creator behavior continuously. Expect contracts to include algorithmic penalty clauses triggered by sentiment analysis. Expect platforms to face pressure to implement predictive moderation, not just reactive enforcement.

Questions people actually ask:

Q: Could AI have prevented West's $2B loss?

Not entirely, but platforms could have reduced the damage if they had implemented predictive moderation tied to brand partnerships. Real-time sentiment analysis combined with brand impact modeling could have flagged the risk hours earlier. The technology exists. The incentives don't.
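One way to picture "moderation tied to brand impact" is a score that weights a policy-violation signal by the author's reach and commercial exposure, so the same post is escalated sooner when more is at stake. The Python sketch below is purely illustrative: the `Post` fields, the weights, the caps, and the threshold are all assumptions made for this example, not any platform's real logic.

```python
from dataclasses import dataclass


@dataclass
class Post:
    violation_prob: float       # hypothetical classifier output in [0, 1]
    follower_count: int         # audience size of the author
    partner_revenue_usd: float  # annual revenue tied to the author's brand deals


def escalation_priority(post, reach_cap=10_000_000, revenue_cap=1_000_000_000):
    """Scale the violation signal by reach and brand exposure.

    Reach and exposure are normalized to [0, 1] against arbitrary caps.
    The 0.5 / 0.25 / 0.25 weights are illustrative assumptions.
    """
    reach = min(post.follower_count / reach_cap, 1.0)
    exposure = min(post.partner_revenue_usd / revenue_cap, 1.0)
    return post.violation_prob * (0.5 + 0.25 * reach + 0.25 * exposure)


def should_escalate(post, threshold=0.6):
    """Send the post to human review when the priority crosses the threshold."""
    return escalation_priority(post) >= threshold


# Same violation probability, very different stakes.
celebrity = Post(violation_prob=0.7, follower_count=30_000_000,
                 partner_revenue_usd=2_000_000_000)
small_account = Post(violation_prob=0.7, follower_count=1_000,
                     partner_revenue_usd=0)

print(should_escalate(celebrity))      # True
print(should_escalate(small_account))  # False
```

The design choice here is deliberate: the score decides how fast a human looks at a post, not whether the post breaks policy.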

Q: Why did platforms take so long to act?

Because their moderation algorithms are trained on user reports and automated policy matching, not on business impact. Twitter's AI flagged the content as policy-violating, but no algorithm was tracking "this will destroy a $2B partnership in 24 hours." Making those connections requires human judgment and a level of cross-platform data integration that platforms rarely maintain.

Q: Will this change content moderation policy?

Yes. Expect higher-profile creators to face faster automated suspensions. Expect more brands to demand real-time API access to content moderation systems. Expect automation to get stricter for obvious policy violations, even if it means less nuance.

Q: What about the bipolar disorder angle?

Current AI systems don't account for disclosed mental health conditions. Should they? That's a policy question automation can't answer alone. It requires human oversight, medical ethics boards, and probably legislation.

Q: Could predictive AI systems create bias?

Absolutely. If AI models are trained to flag creators "at risk of controversial behavior" based on past posts, you're automating pre-crime surveillance. That's legally and ethically sketchy. The West case shows why brands want it, but also why it's dangerous.
